How to design a distributed ID counter?

Hello everyone, I'm Brother Shu.

In a complex distributed system, it is often necessary to uniquely identify a large amount of data and messages, such as the ID primary key of sub-database and sub-table, the request ID of distributed tracing, and so on.

Therefore, designing a "distributed ID number generator" has become a very common system design problem. Today I will take you to learn how to design a distributed ID number generator.

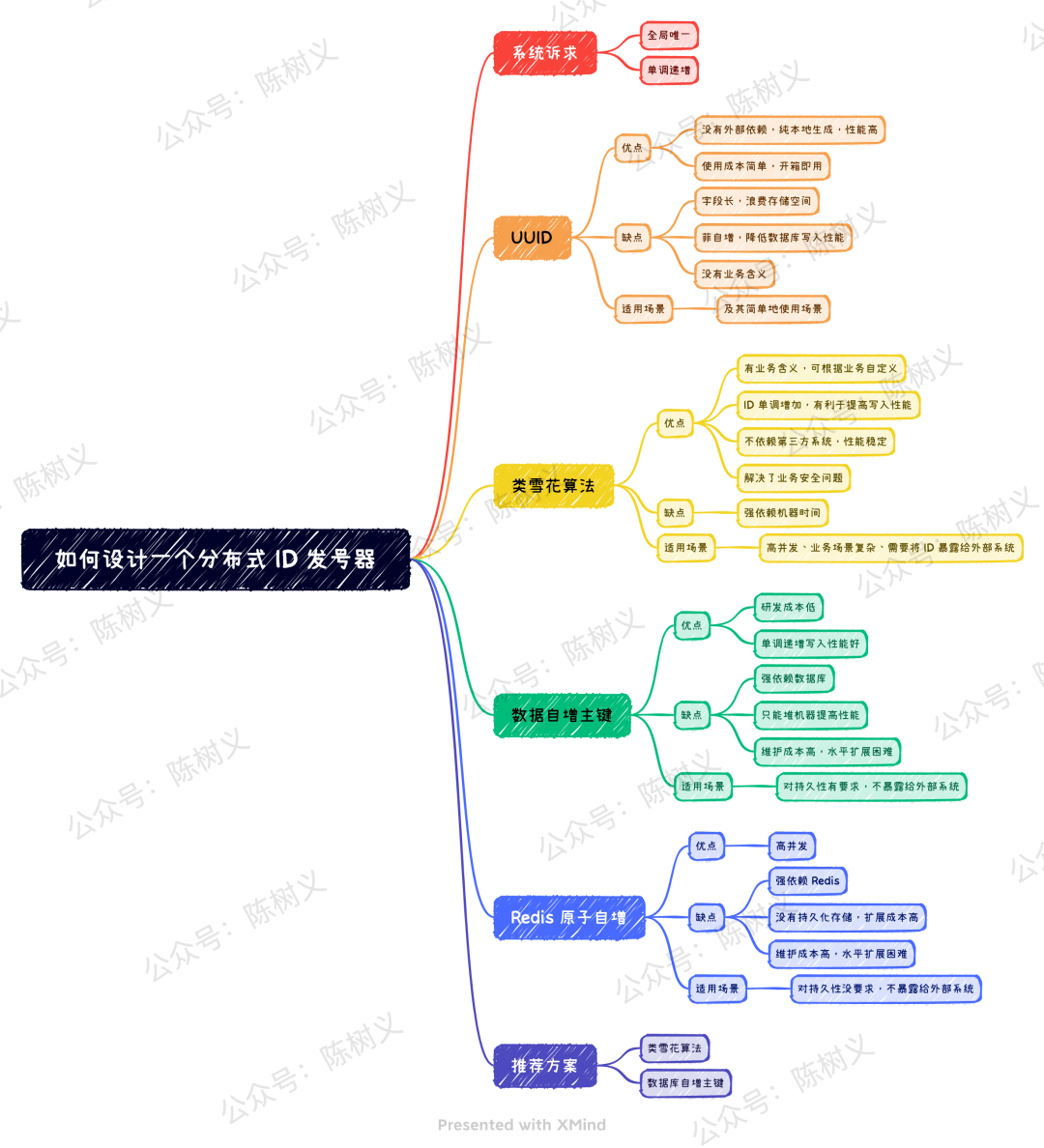

Article Mind Map

System requirements

For business systems, there are generally the following requirements for globally unique IDs:

- Globally unique. The generated ID cannot be repeated. This is the most basic requirement. Otherwise, a primary key conflict will occur in the scenario of sub-database and sub-table.

- Monotonically increasing. Ensure that the next ID is greater than the previous ID, which can ensure sequential writing when writing to the database and improve writing performance.

For the above two requirements, the first point is required by all systems. The second point is not required by all systems. For example, the request ID of distributed tracing does not need to be monotonically increasing. For those scenarios that need to be stored in the database as an ID gradually, it may be necessary to ensure that the globally unique ID is monotonically increasing.

In addition, we may also need to consider security aspects. If a globally unique ID is sequentially incremented, it may cause business information leakage. For example, if the order ID is incremented by 1 each time, the competitor can know the number of our daily orders directly through the order ID, which is unacceptable for the business.

For the above demands, there are many unique ID solutions on the market, among which the most common solutions are as follows:

- UUID

- Snowflake-like algorithm

- Database auto-incrementing primary key

- Redis atomic increment

UUID

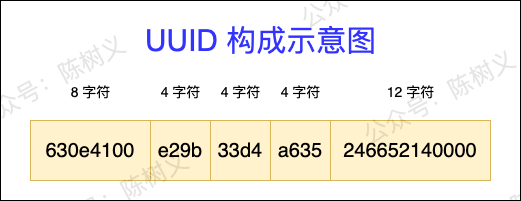

The full name of UUID is Universally Unique Identifier, which is a globally unique identifier, which is an API that comes with Java. A standard UUID contains 32 hexadecimal numbers, which are divided into 5 segments with the dash as a separator, each segment is 8 characters, 4 characters, 4 characters, 4 characters, 12 characters in length, and the size is 36 characters, as shown in the figure below. A simple UUID example: 630e4100-e29b-33d4-a635-246652140000.

UUID composition diagram

For the unique ID solution such as UUID, the advantage is that there is no external dependency, and it is purely locally generated, so its performance is very high. But the disadvantages are also very obvious:

Fields are very long and waste storage space. UUID is generally 36 strings in length. If it is stored as the primary key of the database, the storage space of the index will be greatly increased.

Non-auto-increment, reducing database write performance. UUID is not self-incrementing. If it is used as the primary key of the database, sequential writing cannot be achieved, which will reduce the database writing performance.

No business implication. UUID has no business meaning, we can't get any meaning from UUID.

Therefore, for UUID, it is more suitable for non-database ID storage, such as generating a local distributed tracing request ID.

Snowflake-like algorithm

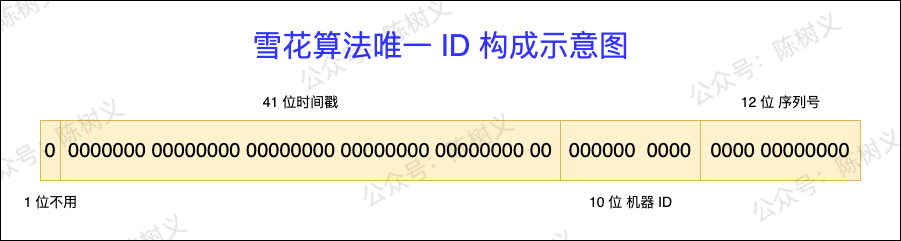

Snowflake algorithm (SnowFlake) is Twitter's open source distributed ID generation algorithm. The idea is to use 64 bits to represent an ID and divide the 64 bits into 4 parts, as shown in the following figure.

Snowflake algorithm unique ID composition diagram

- First part: 1 bit. Fixed to 0, represented as a positive integer. The highest bit in binary is the sign bit, 1 for negative numbers and 0 for positive numbers. IDs are all positive integers, so they are fixed at 0.

- Second part: 41 bits. Represents a timestamp, accurate to the millisecond, and can be used for 69 years. Timestamps have an auto-increment property.

- The third part: 10 bits. Indicates a 10-bit machine ID, and supports up to 1024 nodes. This part can also be split into 5-digit datacenterId and 5-digit workerId, where datacenterId represents the computer room ID, and workerId represents the machine ID.

- Part 4: 12 bits. Indicates serialization, that is, the self-incrementing ID of some columns, which can support the generation of up to 4095 ID serial numbers for the same node in the same millisecond.

The advantages of the snowflake algorithm are:

- Has business meaning and is customizable. Each digit of the ID of the snowflake algorithm has a special meaning, and we can infer the corresponding meaning from the different digits of the ID. In addition, we can also add or delete the number of bits in each part according to our own needs, so as to implement a custom snowflake algorithm.

- The ID increases monotonically, which is beneficial to improve the write performance. The last part of the ID of the snowflake algorithm is an incremental serial number, so the generated ID is incremental, and when it is used as the database primary key ID, sequential writing can be achieved, thereby improving the writing performance.

- Does not rely on third-party systems. The generation method of the snowflake algorithm does not rely on third-party systems or middleware, so its stability is high.

- Security issues are resolved. The IDs generated by the Snowflake algorithm are monotonically increasing, but the increment step size is not deterministic, so the number of generated IDs cannot be inferred from the difference in IDs, thus protecting business privacy.

Snowflake algorithm can be almost perfect, but it has a fatal disadvantage - strong dependence on machine time. If the system time on the machine is dialed back, that is, the time is slower than the normal time, there may be a situation where the number is repeated.

For this case, we can maintain a file locally, write the last timestamp, and then compare with the current timestamp. If the current time stamp is smaller than the last time stamp, there is a problem with the system time, and it should be dealt with in time.

Overall, the Snowflake algorithm is not only shorter in length, but also has business implications, and can improve write performance in database storage scenarios. Therefore, the Snowflake algorithm to generate distributed unique IDs is welcomed by everyone.

At present, the implementation of open source signal generators of many domestic manufacturers is based on the snowflake algorithm, such as: Baidu's open-source UidGenerator, Meituan's open-source Leaf, and so on. The core of these snowflake-like algorithms is to divide 64 bits more reasonably, making them more suitable for their own scenarios.

Database auto-incrementing primary key

Speaking of unique IDs, we naturally think of the self-incrementing primary key of the database, because it is unique.

In the case of low concurrency, we can directly deploy one machine, insert a piece of data into the database table every time we get the ID, and then return the primary key ID.

The advantage of this approach is that it is very simple and low cost to implement. In addition, the generated unique ID is also monotonically increasing, which can meet the requirements of database write performance.

But its shortcomings are also very obvious, that is, its strong dependence on the database. When the database is abnormal, it will cause the entire system to be unavailable. Even if high-availability switching is performed, when the data synchronization is inconsistent during master-slave switching, it may still cause repeated numbering.

In addition, because it is a single-machine deployment, its performance bottleneck is limited to the read and write performance of a single MySQL machine, and it is destined to be unable to undertake high-concurrency business scenarios.

For the performance problems mentioned above, we can solve them through cluster deployment. The ID conflict problem after cluster deployment can be solved by setting the incremental step size. For example, if we have 3 machines, then we set the increment step to 3, and the ID generation strategy for each machine is:

- The first machine, starting from 0 and increasing with a step size of 3, generates IDs: 0, 3, 6, 9, and so on.

- The second machine, starting from 1 and increasing with a step size of 3, generates IDs: 1, 4, 7, 10, and so on.

- The third machine, starting from 2 and increasing with a step size of 3, generates IDs: 2, 5, 8, 11, and so on.

This method solves the problem of cluster deployment and ID conflict, and can improve the capacity of concurrent access to a certain extent. But its disadvantages are also more obvious:

Only rely on heap machines to improve performance. When the requests increase again, we can only heap machines indefinitely, which seems to be a physical defense.

Difficulty scaling horizontally. When we need to add a machine, its processing is very troublesome. First of all, we need to deploy the new server first, set a new step size, and set the starting value to an impossible value. After the newly added servers are deployed, the old servers are dealt with one by one. This process is really painful. It can be said that it is human flesh operation and maintenance.

Redis atomic increment

Since Redis is an in-memory database, its powerful performance is very suitable for high-concurrency distributed ID generation. To implement self-incrementing ID based on Redis, it mainly uses the INCR command in Redis. This command can increment a number by one and return the result, and this operation is an atomic operation.

The distributed ID function is realized through Redis, and its mode is similar to that of self-incrementing ID through the database, except that the storage medium is changed from hard disk to memory. When a single Redis cannot support concurrent requests, Redis can also be solved by cluster deployment and setting the step size.

However, there is a problem with the self-incrementing primary key of the database, and the method of Redis self-incrementing ID also has the same problem, that is, it can only heap machines, and it is difficult to scale horizontally. In addition, compared to the persistence of database storage, Redis is a memory-based storage, and the problem of persistence needs to be considered, which is also a headache.

Summarize

After seeing so many solutions for distributed ID, which one should we choose?

When we are making decisions, we should determine the dimensions of the decision. For this problem, the dimensions we should pay attention to are roughly: R&D cost, concurrency, performance, and operation and maintenance cost.

First of all, for UUID, it is not as good as the snowflake algorithm in all aspects. The only advantage is that the JDK has its own API. Therefore, if you just use it very simply, you can use UUID directly. After all, the snowflake algorithm has to write the implementation code.

Secondly, for the snowflake-like algorithm, it is undoubtedly a very good implementation. Compared with UUID, it not only has the advantages of locally generated UUID and does not rely on third-party systems, but also has business implications, can improve writing performance, and solve security problems. But its disadvantage is that it needs to implement the code of the snowflake algorithm, so its development cost is slightly higher than that of UUID.

Finally, for the two methods of database auto-increment ID and Redis atomic auto-increment. The advantage of the method of automatically incrementing the ID of the database is also that it is simple and convenient, and does not require too high research and development costs. However, the disadvantage is that the supported concurrency is too low, and the subsequent operation and maintenance costs are too high. Therefore, the method of automatically incrementing the ID of the database should be suitable for small-scale usage scenarios. The Redis atomic auto-increment method has priority to support high concurrency scenarios. But the disadvantage is that it needs to deal with the persistence problem by itself, and the operation and maintenance cost may be relatively high.

I prefer the database auto-increment method. Both ways are very similar, the only difference is the storage medium. The atomic auto-increment method of Redis is very fast, and a single machine may be several times faster than the database method. But if you want to consider the problem of persistence, it is too complicated for Redis.

Let's sort out the above four implementations and summarize them into the following comparison table:

Implementation plan | advantage | shortcoming | scenes to be used |

UUID | High performance, independent of third parties, low R&D cost | The long field wastes storage space, the ID does not auto-increment, the writing performance is poor, and there is no business meaning | Very simple usage scenario (for simple testing) |

Snowflake-like algorithm | Has business meaning, monotonically increasing write performance, does not depend on third parties, and has business security | Strong dependence on machine time | High concurrency, complex business scenarios, and need to expose IDs to external systems |

Database auto-increment ID | Low R&D cost, good monotonically increasing write performance | Depends on database, can only heap machines to improve performance, high maintenance cost | Requires persistence, not exposed to external systems |

Redis atomic increment | High concurrency, monotonically increasing write performance | Depends on Redis, has business problems, has persistence problems | No requirement for persistence, not exposed to external systems |

In general, if you consider long-term use, then operation and maintenance costs and high concurrency must be considered. Under this basic condition, the snowflake-like algorithm and database auto-increment ID may be relatively good choices.

References

- Some Thoughts on the Global Number Generator of Distributed Systems - Nuggets

- VIP! ! very good! Leaf——Meituan Dianping Distributed ID Generation System - Meituan Technical Team

- (8 messages) Six ways to implement a globally unique number generator - Beihe M's Blog - CSDN Blog

- VIP! ! Yes, expand your horizons! 10 | Issuer: How to ensure the global uniqueness of ID after sub-database and sub-table?