Does using TCP mean there is no packet loss?

Packet sending process

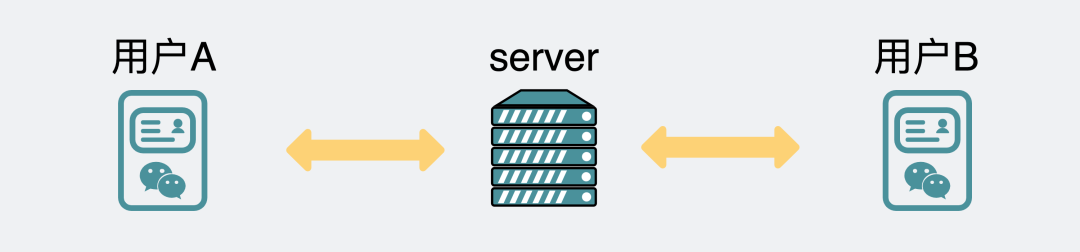

First of all, when the green-skinned chat app on our two phones exchanges messages, the traffic passes through the chat service's own server in the middle. It looks roughly like this.

Chat software three-terminal communication

To simplify the model, though, we leave out the intermediate server and treat this as end-to-end communication. And since the messages need to arrive reliably, let's assume the two ends talk to each other over TCP.

Communication between two ends of chat software

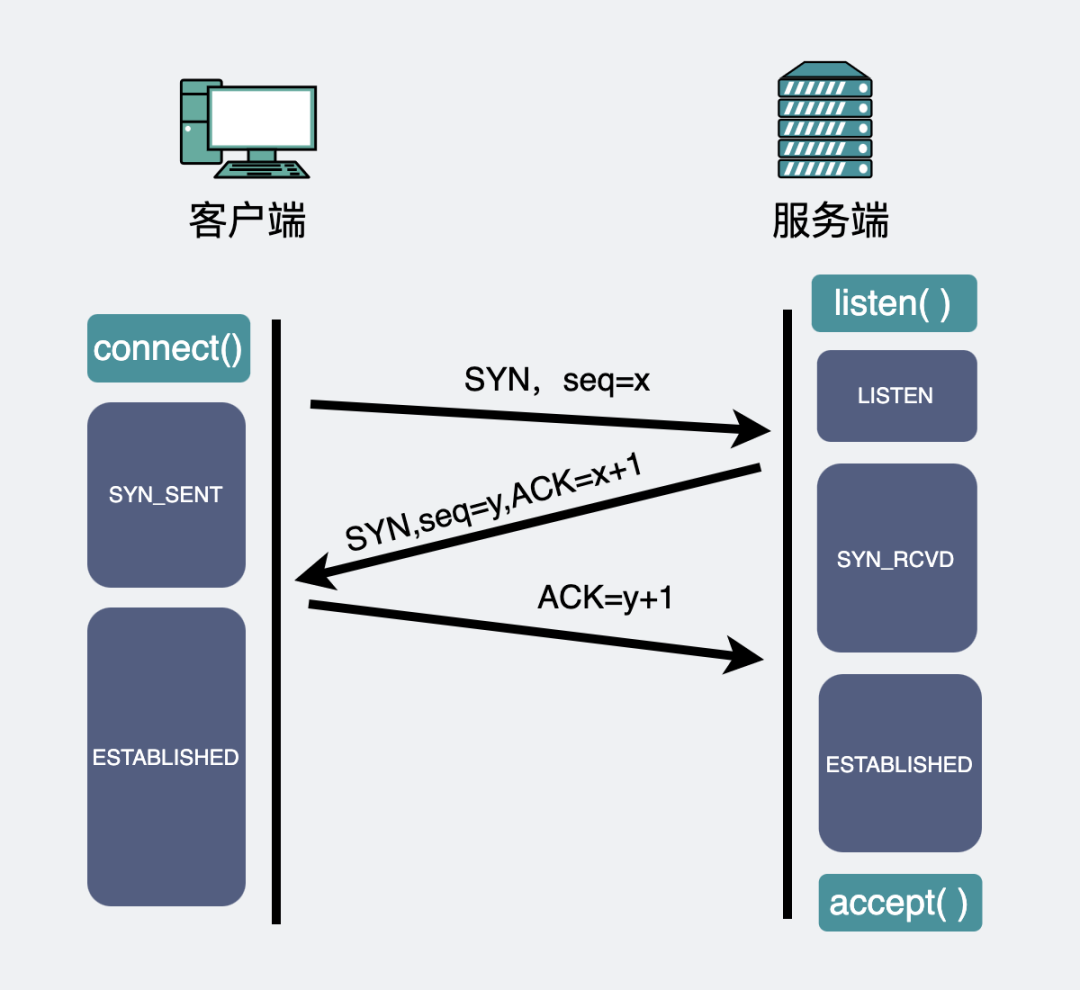

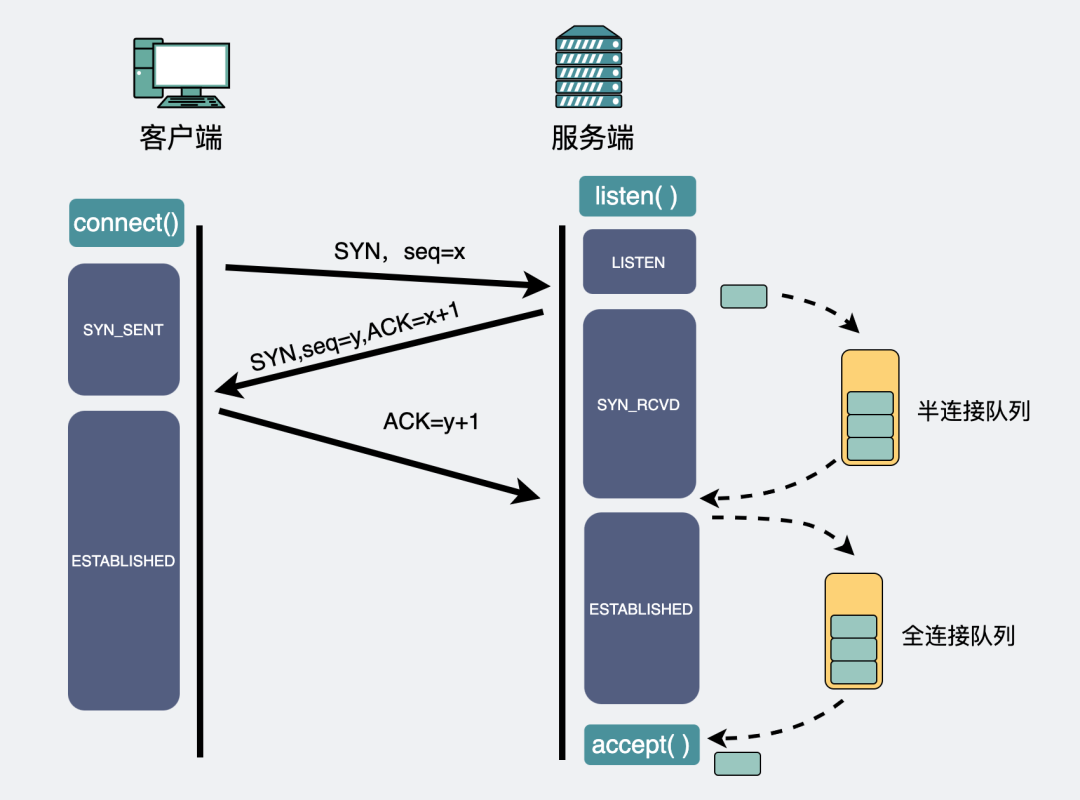

In order to send data packets, the two ends will first establish a TCP connection through a three-way handshake.

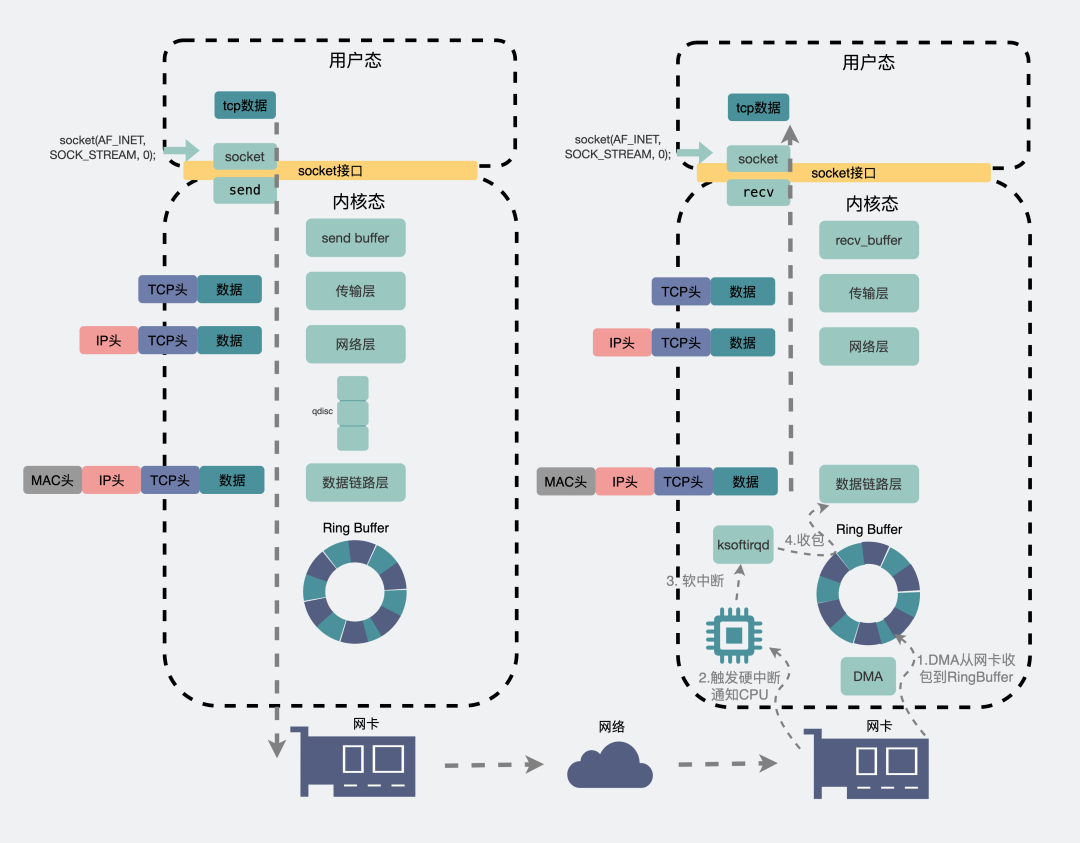

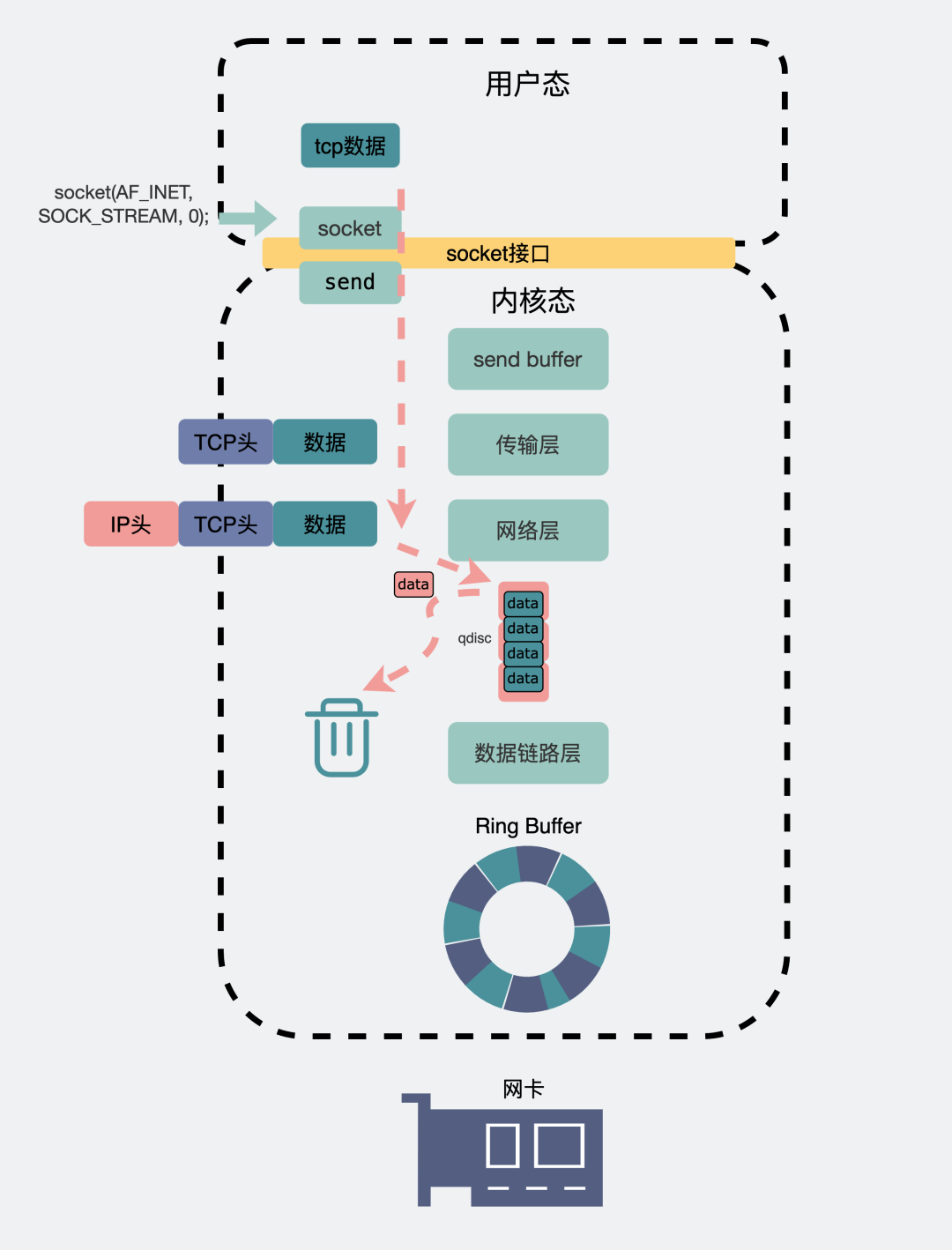

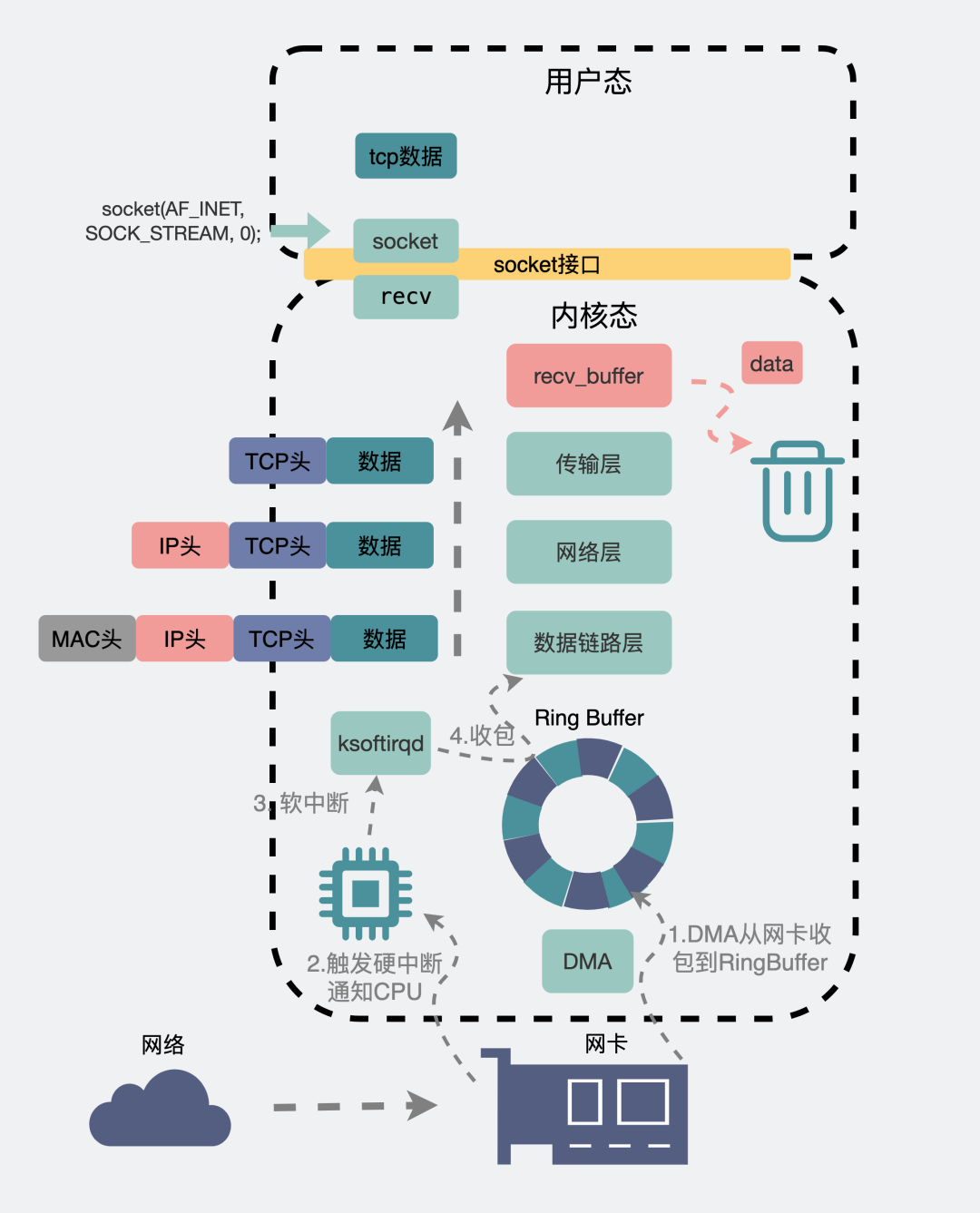

When a message is sent from the chat box, it is copied from user space, where the chat software runs, into the send buffer in kernel space. From there the packet travels down through the transport layer and the network layer into the data link layer, where it passes through flow control (qdisc) and is then handed to the network card at the physical layer via the RingBuffer. The network card sends the data out into the complicated world of the network, where it hops through a series of routers and switches before finally arriving at the network card of the destination machine.

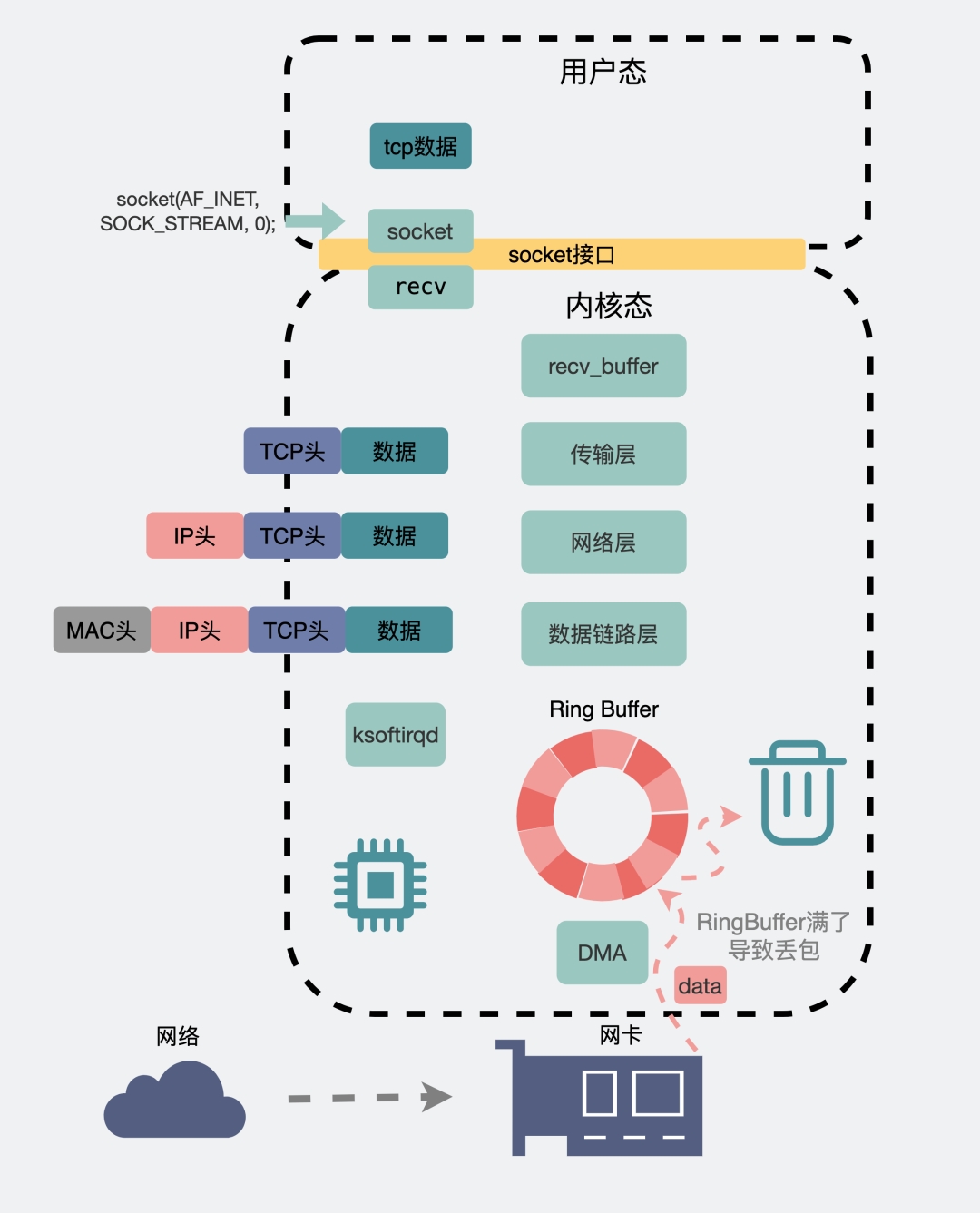

At this point, the destination machine's network card uses DMA to place the packet in the RingBuffer and then raises a hard interrupt to the CPU. The CPU in turn triggers a soft interrupt so that ksoftirqd fetches the packet from the RingBuffer. The packet then travels up through the physical layer, the data link layer, the network layer and the transport layer, and is finally copied from kernel space to the chat software in user space.

Panorama of network sending and receiving packets

After drawing such a big picture and explaining it in only 200 words, I am somewhat heartbroken.

Details aside, you now have a rough picture of the journey a packet takes from sending to receiving.

As you can see, it is densely packed with jargon.

Along the whole link, packets can be lost in many places.

But so that nobody stays in a squatting position for too long and harms their health, I will focus only on a few common scenarios that are prone to packet loss.

Packet loss when establishing connection

TCP establishes a connection through a three-way handshake. It looks roughly like this.

TCP three-way handshake

On the server side, after the first handshake a half-open connection is created, and then the second handshake is sent. These half-open connections need to be stored somewhere temporarily, and that place is called the half-connection queue.

When the third handshake arrives, the half-open connection is upgraded to a full connection and parked in another place, the full-connection queue, where it waits for the program to call accept() and take it away.

Half-connection queue and full-connection queue

Every queue has a length, and anything with a length can fill up. Once a queue is full, newly arriving packets are discarded.

You can check whether there is such a packet loss behavior in the following way.

# Number of times the full-connection queue overflowed

# netstat -s | grep overflowed

4343 times the listen queue of a socket overflowed

# Number of times the half-connection queue overflowed

# netstat -s | grep -i "SYNs to LISTEN sockets dropped"

109 SYNs to LISTEN sockets dropped

The visible symptom is that connections fail to be established.
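To watch these two counters over time rather than by eye, the netstat output can be parsed by a small script. The following is only a sketch: the function name `listen_queue_counters` and the sample text are made up for illustration, and it assumes the English wording that `netstat -s` prints on Linux.

```python
import re

# Sample text in the shape printed by `netstat -s`; the numbers are the ones
# quoted in this article, not fresh measurements.
SAMPLE_NETSTAT = """\
    4343 times the listen queue of a socket overflowed
    109 SYNs to LISTEN sockets dropped
"""


def listen_queue_counters(netstat_text: str) -> dict:
    """Return both drop counters, using 0 when a line is absent."""
    patterns = {
        "accept_queue_overflows": r"(\d+) times the listen queue of a socket overflowed",
        "syn_drops": r"(\d+) SYNs to LISTEN sockets dropped",
    }
    counters = {}
    for name, pattern in patterns.items():
        match = re.search(pattern, netstat_text)
        counters[name] = int(match.group(1)) if match else 0
    return counters


if __name__ == "__main__":
    print(listen_queue_counters(SAMPLE_NETSTAT))
```

Both numbers are cumulative since boot, so what matters in practice is whether they keep growing between two samples.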

Flow control packet loss

Plenty of programs at the application layer can send network packets. If all of that data rushed into the network card unchecked, the network card would be overwhelmed. So what can be done? The data is queued and processed according to certain rules. This is the so-called qdisc (queueing discipline), which is the flow control mechanism we often talk about.

To queue, there must be a queue first, and the queue has a length.

In the ifconfig output shown below, the number 1000 after txqueuelen is the length of the flow control queue.

When the data is sent too fast and the flow control queue length txqueuelen is not large enough, packet loss is prone to occur.

qdisc packet loss

You can use the following ifconfig command to view the dropped field under TX. When it is greater than 0, it is possible that flow control packet loss has occurred.

# ifconfig eth0

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.21.66.69 netmask 255.255.240.0 broadcast 172.21.79.255

inet6 fe80::216:3eff:fe25:269f prefixlen 64 scopeid 0x20<link>

ether 00:16:3e:25:26:9f txqueuelen 1000 (Ethernet)

RX packets 6962682 bytes 1119047079 (1.0 GiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 9688919 bytes 2072511384 (1.9 GiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

When this happens, we can try changing the length of the flow control queue. For example, the following command increases the flow control queue length of the eth0 network card from 1000 to 1500.

# ifconfig eth0 txqueuelen 1500
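The same two numbers, the queue length and the TX dropped counter, are also exposed as plain files under /sys/class/net on Linux, which is convenient for scripts. A minimal sketch, assuming the interface is called eth0 as in this article; the helper names `read_iface_value` and `tx_queue_report` are hypothetical.

```python
from pathlib import Path


def read_iface_value(iface: str, relative: str, root: str = "/sys/class/net") -> int:
    """Read one integer from the interface's sysfs directory."""
    return int((Path(root) / iface / relative).read_text().strip())


def tx_queue_report(iface: str, root: str = "/sys/class/net") -> dict:
    """Collect the flow control queue length and the TX drop counter."""
    return {
        "tx_queue_len": read_iface_value(iface, "tx_queue_len", root),
        "tx_dropped": read_iface_value(iface, "statistics/tx_dropped", root),
    }


if __name__ == "__main__":
    # "eth0" is the interface name used in this article; yours may differ.
    try:
        print(tx_queue_report("eth0"))
    except OSError as exc:
        print(f"could not read sysfs for eth0: {exc}")
```

The `root` parameter exists only so the function can be pointed at a test directory; on a real machine the default is what you want.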

NIC packet loss

Packet loss caused by the network card and its driver is also common. There are many possible reasons, such as a poor-quality network cable or a loose connection. Beyond those, here are a few common scenarios.

RingBuffer is too small, resulting in packet loss

As mentioned above, when data is received it is temporarily stored in the RingBuffer, and then waits for the kernel to trigger a soft interrupt and collect it at its own pace. If this buffer is too small and data arrives too quickly, it can overflow, and packets are lost.

RingBuffer is full, causing packet loss

We can use the following command to see if such a thing has happened.

# ifconfig

eth0: RX errors 0 dropped 0 overruns 0 frame 0

Check out the overruns metric above, which records the number of overflows due to insufficient RingBuffer length.

Of course, it can also be viewed with the ethtool command.

# ethtool -S eth0|grep rx_queue_0_drops

Note that a network card can have multiple RingBuffers, so the 0 in rx_queue_0_drops refers to the number of packets dropped by RingBuffer number 0. On a multi-queue network card, this 0 can be replaced with other numbers. My budget does not stretch far enough for me to look at packet loss on other queues, though, so the command above is enough for me.

When this type of packet loss is found, you can run the following command to view the configuration of the current network card.

# ethtool -g eth0

Ring parameters for eth0:

Pre-set maximums:

RX: 4096

RX Mini: 0

RX Jumbo: 0

TX: 4096

Current hardware settings:

RX: 1024

RX Mini: 0

RX Jumbo: 0

TX: 1024

The above output means that RingBuffer supports a maximum length of 4096, but now only 1024 is actually used.

To change this length, run ethtool -G eth0 rx 4096 tx 4096, which sets both the receive and the transmit RingBuffer to 4096.

After the RingBuffer is increased, the packet loss caused by the small capacity can be reduced.
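Before changing anything, it helps to know how much room is left between the current ring size and the hardware maximum. The sketch below parses text in the layout printed by `ethtool -g` and reports that headroom. The function names and the embedded sample are illustrative assumptions, and real output may carry extra fields that this parser simply ignores.

```python
SAMPLE_ETHTOOL_G = """\
Ring parameters for eth0:
Pre-set maximums:
RX:             4096
RX Mini:        0
RX Jumbo:       0
TX:             4096
Current hardware settings:
RX:             1024
RX Mini:        0
RX Jumbo:       0
TX:             1024
"""


def parse_ring_parameters(text: str) -> dict:
    """Split `ethtool -g` style output into 'max' and 'current' sections."""
    sections = {"Pre-set maximums:": "max", "Current hardware settings:": "current"}
    result = {"max": {}, "current": {}}
    active = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if line in sections:
            active = sections[line]
        elif active and ":" in line:
            key, _, value = line.partition(":")
            value = value.strip()
            if value.isdigit():  # skip anything that is not a plain number
                result[active][key.strip()] = int(value)
    return result


def ring_headroom(text: str) -> dict:
    """For RX and TX, report how far the current size is below the maximum."""
    rings = parse_ring_parameters(text)
    return {
        name: rings["max"][name] - rings["current"][name]
        for name in ("RX", "TX")
        if name in rings["max"] and name in rings["current"]
    }


if __name__ == "__main__":
    print(ring_headroom(SAMPLE_ETHTOOL_G))
```

A headroom of 0 means the ring is already at its maximum, so enlarging it is no longer an option and the cause has to be looked for elsewhere.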

Insufficient network card performance

As a piece of hardware, the network card has an upper limit on its transmission speed. When network traffic reaches that limit, packets are lost. This is most often seen in stress-testing scenarios.

Running ethtool followed by the network card name shows the maximum speed the current network card supports.

# ethtool eth0

Settings for eth0:

Speed: 1000Mb/s

As you can see, the network card I am using here supports a maximum transmission speed of 1000Mb/s.

This is what is commonly called a Gigabit network card. Note, however, that the unit here is Mb, where b means bit, not Byte, and 1 Byte = 8 bits. So 1000Mb/s has to be divided by 8: the theoretical maximum transmission speed of the network card is 1000/8 = 125MB/s.

We can analyze the sending and receiving of data packets from the network interface level through the sar command.

# sar -n DEV 1

Linux 3.10.0-1127.19.1.el7.x86_64 2022-07-27 _x86_64_ (1 CPU)

08:35:39 IFACE rxpck/s txpck/s rxkB/s txkB/s rxcmp/s txcmp/s rxmcst/s

08:35:40 eth0 6.06 4.04 0.35 121682.33 0.00 0.00 0.00

Here, txkB/s is the amount of data sent per second and rxkB/s is the amount of data received per second, both in kilobytes.

When the two values add up to roughly 120,000 to 130,000 kB/s, the transmission speed is about 125MB/s. At that point the network card has reached its performance limit, and packets start to be lost.
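The comparison between the sar numbers and the link speed can be written down as a small calculation. This sketch follows the rule of thumb above of adding the two directions together, treats 1 MB as 1000 kB (an approximation), and assumes the timestamp is a single field so that the columns line up as in the output shown. All function names are made up for illustration.

```python
def link_capacity_kb_per_s(speed_mbit: float) -> float:
    """Convert a link speed in Mb/s (megabits) to kB/s (kilobytes)."""
    return speed_mbit / 8 * 1000  # 8 bits per byte, 1000 kB per MB


def parse_sar_dev_line(line: str) -> dict:
    """Parse one data row of `sar -n DEV`, using the column order shown above."""
    fields = line.split()
    # fields: time, IFACE, rxpck/s, txpck/s, rxkB/s, txkB/s, ...
    return {"iface": fields[1], "rx_kb": float(fields[4]), "tx_kb": float(fields[5])}


def link_utilisation(line: str, speed_mbit: float) -> float:
    """Fraction of the link capacity used by rx and tx traffic combined."""
    row = parse_sar_dev_line(line)
    return (row["rx_kb"] + row["tx_kb"]) / link_capacity_kb_per_s(speed_mbit)


if __name__ == "__main__":
    sample = "08:35:40 eth0 6.06 4.04 0.35 121682.33 0.00 0.00 0.00"
    print(f"{link_utilisation(sample, 1000):.1%} of a 1000Mb/s link")
```

A result close to 1.0 means the network card is running at its limit.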

When you run into this problem, first check whether your service really does carry that much genuine traffic. If it does, consider splitting the service, or grudgingly pay up to upgrade the configuration.

Receive buffer packet loss

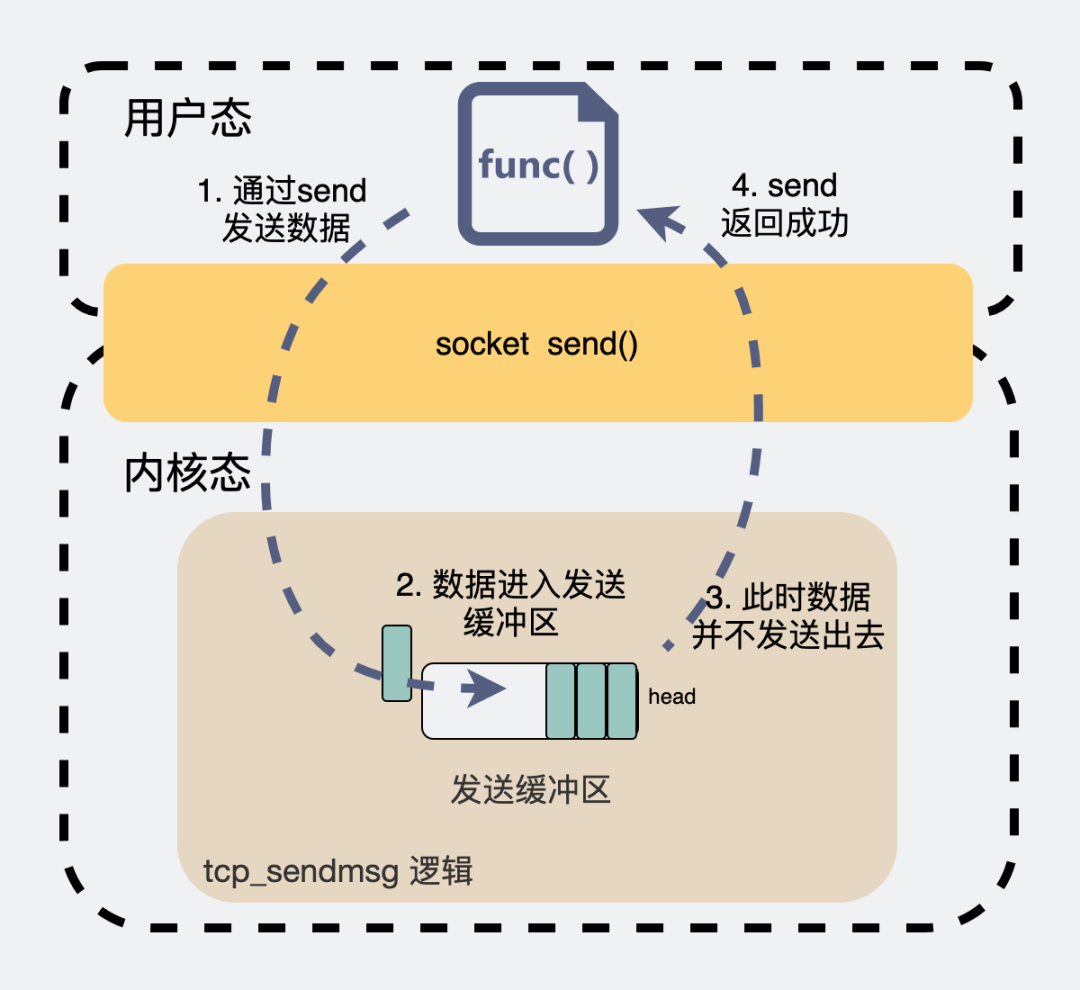

When we do network programming with a TCP socket, the kernel allocates a send buffer and a receive buffer for it.

When we want to send a packet, we call send(msg) in the code. The packet does not fly straight out through the network card at that moment. Instead, the data is copied into the kernel's send buffer and the call returns. When the data is actually sent, and how much of it, is decided later by the kernel itself.

tcp_sendmsg logic

The receive buffer works similarly. The data packets received from the external network are temporarily stored in this place, and then wait for the user space application to take the data packets away.

These two buffers are limited in size, which can be viewed with the following commands.

# View the receive buffer

# sysctl net.ipv4.tcp_rmem

net.ipv4.tcp_rmem = 4096 87380 6291456

# View the send buffer

# sysctl net.ipv4.tcp_wmem

net.ipv4.tcp_wmem = 4096 16384 4194304

Whether it is the receive buffer or the send buffer, you can see three values, which correspond to the minimum, default and maximum values of the buffer (min, default, max). The buffer is dynamically adjusted between min and max.
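From inside a program, the sizes the kernel has assigned to one particular socket can be read with getsockopt. The sketch below creates a throwaway TCP socket and asks for both values. The function name is hypothetical, and the numbers you get depend on your system's settings, so none are shown here.

```python
import socket


def socket_buffer_sizes() -> dict:
    """Create a throwaway TCP socket and ask the kernel for its buffer sizes."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        return {
            "recv": sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF),
            "send": sock.getsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF),
        }


if __name__ == "__main__":
    sizes = socket_buffer_sizes()
    print(f"receive buffer: {sizes['recv']} bytes, send buffer: {sizes['send']} bytes")
```

On a fresh, unconnected socket these values typically reflect the default column of the sysctl output, since the dynamic adjustment only starts once data is flowing.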

So the question is, what happens if the buffer is set too small?

For the send buffer, when executing send, if it is a blocking call, it will wait until there is space in the buffer to send data.

send blocks

If it is a non-blocking call, it returns an EAGAIN error immediately, which means "try again": the application should retry later. Packet loss generally does not occur in this case.

send non-blocking
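The non-blocking case can be reproduced on a single machine. In this sketch a TCP connection is opened over the loopback interface, the receiving side never reads, and the sender keeps calling send until the kernel refuses. Python reports the refusal as BlockingIOError carrying EAGAIN. The function name `fill_send_buffer` and the chunk size are arbitrary choices for illustration.

```python
import errno
import socket


def fill_send_buffer(chunk_size: int = 64 * 1024) -> tuple:
    """Send on a non-blocking TCP socket until the kernel refuses more data.

    Returns (bytes_accepted, errno_of_refusal).
    """
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    listener.listen(1)
    sender = socket.create_connection(listener.getsockname())
    receiver, _ = listener.accept()  # accepted, but never read from
    sender.setblocking(False)
    accepted = 0
    payload = b"x" * chunk_size
    try:
        while True:
            accepted += sender.send(payload)  # copies into the kernel send buffer
    except BlockingIOError as exc:
        return accepted, exc.errno  # buffers are full: nothing was dropped
    finally:
        sender.close()
        receiver.close()
        listener.close()


if __name__ == "__main__":
    sent, err = fill_send_buffer()
    print(f"kernel accepted {sent} bytes, then returned {errno.errorcode[err]}")
```

Every byte counted in `accepted` is safely held in a kernel buffer. The refused data never left the application, which is why this situation does not count as packet loss.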

When the receive buffer is full, things are different. The TCP receive window drops to 0, the so-called zero window, and the receiver tells the sender through win=0 in its packets: "Please, I can't take any more, stop sending." The sender should then stop sending, but if data still arrives at this point, it is dropped.

recv_buffer packet loss

We can check whether this packet loss has occurred through TCPRcvQDrop in the following command.

# cat /proc/net/netstat

TcpExt: SyncookiesSent TCPRcvQDrop SyncookiesFailed

TcpExt: 0 157 60116
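/proc/net/netstat is hard to read by eye because the names sit on one line and the values on the next. A short script can pair them up. This is a sketch with a hypothetical function name, and it assumes the file keeps this alternating header and value layout.

```python
SAMPLE_PROC_NETSTAT = """\
TcpExt: SyncookiesSent TCPRcvQDrop SyncookiesFailed
TcpExt: 0 157 60116
"""


def parse_proc_net_netstat(text: str) -> dict:
    """Pair each header line with the value line that follows it."""
    counters = {}
    lines = [line.split() for line in text.splitlines() if line.strip()]
    for names, values in zip(lines[0::2], lines[1::2]):
        prefix = names[0]  # for example "TcpExt:"
        for name, value in zip(names[1:], values[1:]):
            counters[f"{prefix}{name}"] = int(value)
    return counters


if __name__ == "__main__":
    counters = parse_proc_net_netstat(SAMPLE_PROC_NETSTAT)
    # .get() returns None when the running kernel does not export the counter
    print(counters.get("TcpExt:TCPRcvQDrop"))
```

On a real machine, pass in the contents of /proc/net/netstat instead of the sample string.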

The sad part is that we usually cannot see TCPRcvQDrop, because this counter was only introduced in kernel version 5.9, while our servers generally run kernels around 2.x to 3.x. You can check which Linux kernel version you are using with the command below.

# cat /proc/version

Linux version 3.10.0-1127.19.1.el7.x86_64

Network packet loss between two ends

So far we have looked at packet loss inside the machines at each end. Beyond that, the long link between the two ends belongs to the external network, with all kinds of routers, switches and optical cables along the way, and packet loss happens there very often too.

These packet loss behaviors occur on certain machines in the intermediate link, and we certainly do not have permission to log in to these machines. But we can observe the connectivity of the entire link through some commands.

ping command to check packet loss

For example, suppose the destination's domain name is baidu.com. To find out whether packets are being lost between your machine and the baidu server, you can use the ping command.

ping to check packet loss

The second-to-last line reports 100% packet loss, which means every packet was lost.

But this only tells you whether packets are lost somewhere between your machine and the destination machine.

What if you want to know which node on the link between you and the destination is losing packets? Is there a way?

There is.

mtr command

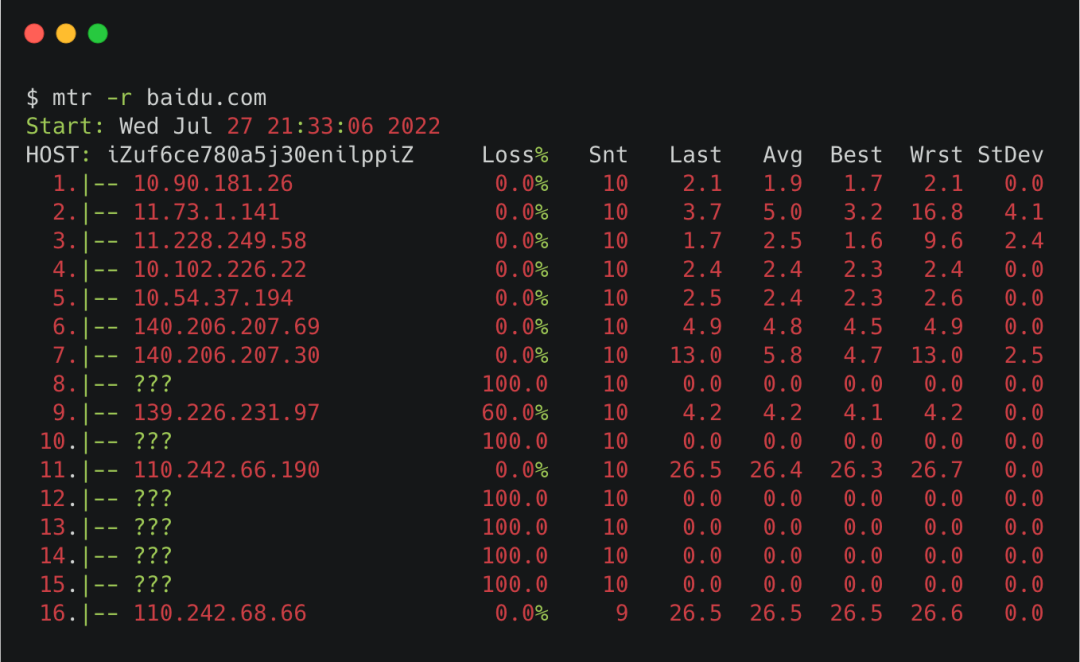

The mtr command can view the packet loss of each node between your machine and the destination machine.

Execute the command like below.

mtr_icmp

Here -r stands for report: the results are printed in the form of a report.

The Host column lists the machine at each hop along the link, and the Loss column shows the packet loss rate for that hop.

Note that some of the hosts in the middle appear as ???. That is because mtr uses ICMP packets by default, and some nodes restrict ICMP, so they cannot be displayed properly.

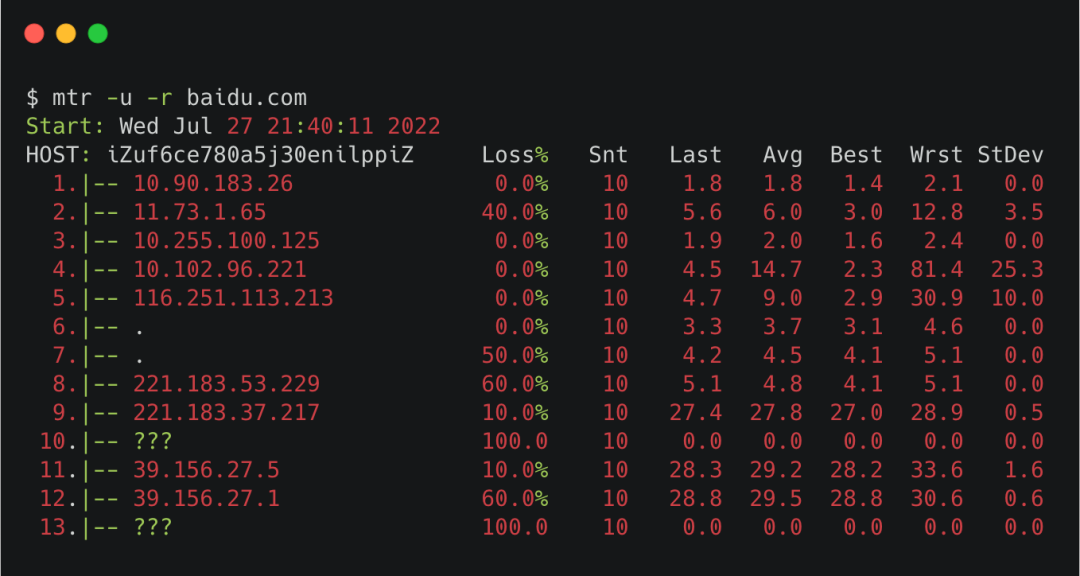

If we add -u to the mtr command so that it uses UDP packets, we can see the IP addresses behind some of the ??? entries.

mtr-udp

Combining the ICMP and UDP results gives a fairly complete picture of the link.

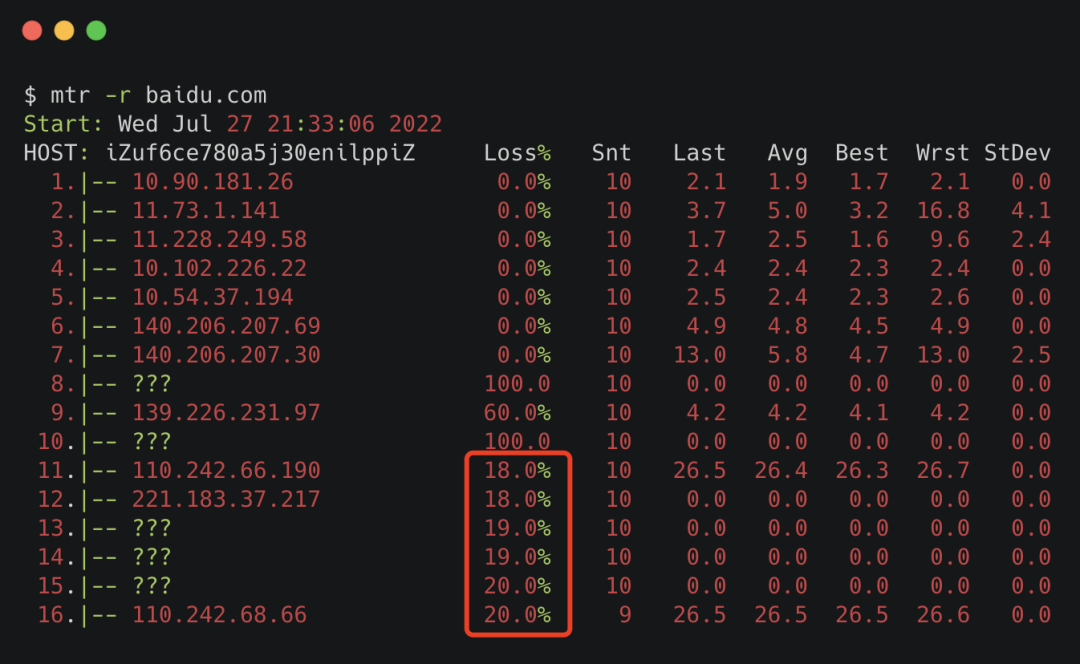

One more small detail. In the Loss column, look at the last line of the ICMP run. If it is 0%, then it does not matter whether earlier hops show 100% or 80% loss: those are false alarms caused by node restrictions.

But if the last line shows 20% and the few lines just before it also show about 20%, the loss starts at the earliest of those lines. If this persists for a long time, there is very likely a real problem. If it is the company intranet, take this clue to the colleagues responsible for the network. If it is an external network, be patient and wait: the other side's developers will be more anxious than you are.
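The rule for reading the Loss column can be expressed as a small function. This is only a sketch of the reasoning above: `first_lossy_hop` is a made-up name, and the 5-point tolerance used to decide that two hops show "about the same" loss is an arbitrary assumption.

```python
def first_lossy_hop(loss_percent: list, tolerance: float = 5.0):
    """Return the 0-based index of the hop where real loss seems to begin.

    loss_percent holds the Loss column from the nearest hop (top) to the
    destination (bottom). Returns None when the destination shows no loss.
    """
    if not loss_percent or loss_percent[-1] == 0:
        return None  # destination is clean: earlier loss is only ICMP restriction
    final = loss_percent[-1]
    start = len(loss_percent) - 1
    # Walk upwards while the previous hop shows roughly the same loss
    # as the destination.
    while start > 0 and abs(loss_percent[start - 1] - final) <= tolerance:
        start -= 1
    return start


if __name__ == "__main__":
    print(first_lossy_hop([0, 100, 80, 0]))      # noisy middle hops, clean destination
    print(first_lossy_hop([0, 0, 20, 21, 20]))   # loss carried through to the end
```

In the second call the loss first appears at index 2 and continues to the destination, so that hop is the one to report to the network team.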

What should I do if a packet is lost?

I have said all this just to tell you that packet loss is very common and almost inevitable.

But then the question is: what should we do when packets are lost?

That one is easy: use TCP for transmission.

what is TCP

Once a TCP connection has been established between the two ends, the sender waits for the receiver to reply with an ack packet after sending data. The ack packet tells the sender that the data really has been received. If a packet is lost on the intermediate link, the sender does not receive the ack for a long time and retransmits. This ensures that every packet actually reaches the receiver.

Suppose the network is disconnected now, and we still use chat software to send messages. The chat software will use TCP to continuously try to retransmit the data. If the network recovers during the retransmission, the data can be sent normally. But if you try multiple times and fail until the timeout, you will get a red exclamation mark.
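The retry-until-ack idea can be shown with a toy model. This is not how TCP is implemented: real TCP uses sequence numbers, timers that back off and a sliding window. The sketch only captures the loop of sending, waiting for an ack and sending again, and both function names are hypothetical.

```python
def send_with_retransmit(deliver, payload, max_attempts: int = 5):
    """Send payload, retrying until an ack arrives or the attempts run out.

    deliver(payload) models one trip through the network: it returns True
    when the ack came back and False when the data or the ack was lost.
    Returns the number of attempts used, or None after giving up.
    """
    for attempt in range(1, max_attempts + 1):
        if deliver(payload):
            return attempt
    return None  # this is where the chat app would show the red exclamation mark


def lossy_link(outcomes):
    """Build a deliver() that replays a fixed list of outcomes (test helper)."""
    remaining = iter(outcomes)
    return lambda payload: next(remaining, False)


if __name__ == "__main__":
    # The network drops the first two attempts, then recovers.
    print(send_with_retransmit(lossy_link([False, False, True]), b"hello"))
    # The network never recovers.
    print(send_with_retransmit(lossy_link([]), b"hello"))
```

The first call succeeds on the third attempt. The second gives up after five attempts, which corresponds to the timeout case described above.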

Now the problem comes again.

Suppose the green-skinned chat software uses TCP.

Then for the girl mentioned at the beginning of the article, how could her boyfriend's reply still be lost? After all, a lost packet is retransmitted, and if the retries fail, a red exclamation mark appears.

So the question becomes: does using TCP mean there is no packet loss?

Does using TCP mean there is no packet loss?

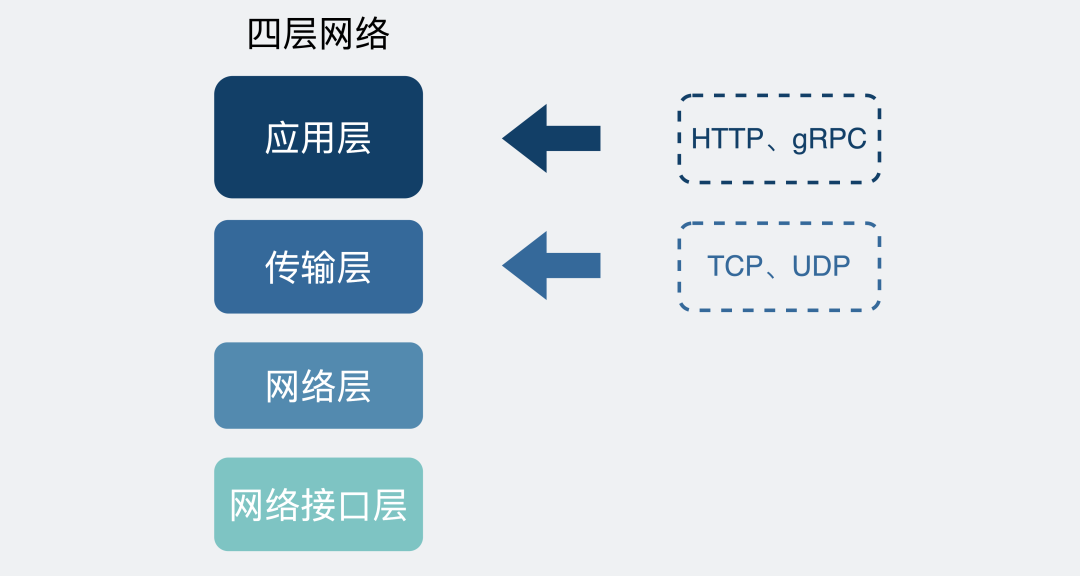

We know that TCP is located at the transport layer, and there are various application layer protocols on top of it, such as common HTTP or various RPC protocols.

Four-layer network protocol stack

The reliability guaranteed by TCP is the reliability of the transport layer. That is to say, TCP only guarantees that data is reliably sent from the transport layer of machine A to the transport layer of machine B.

As for whether the data makes it up to the application layer after reaching the transport layer at the receiving end, TCP does not care.

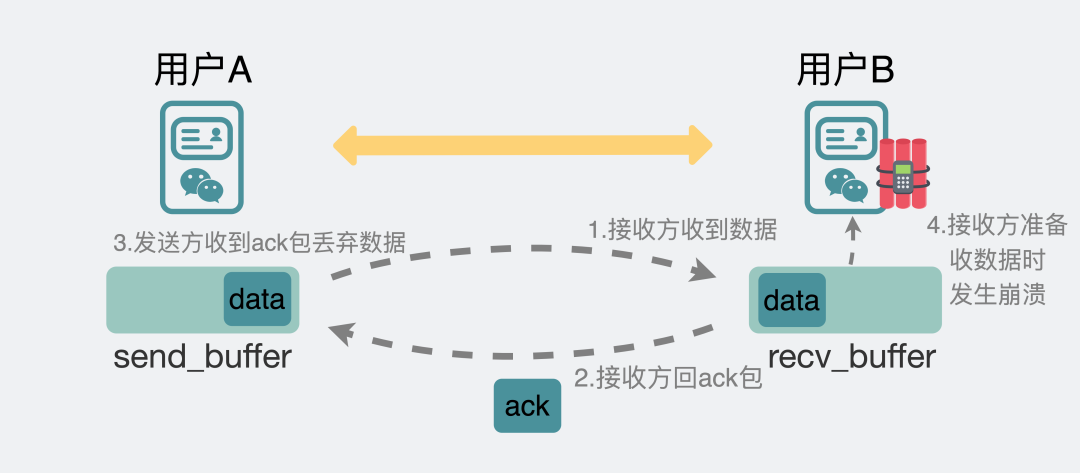

Suppose we type a message and send it from the chat box. It goes into the TCP send buffer at the transport layer and, whether or not packets are lost along the way, retransmission ensures that it eventually reaches the TCP receive buffer at the other party's transport layer. The receiver then replies with an ack, and once the sender receives the ack it discards the message from its send buffer. At this point, TCP's job is done.

The TCP task is over, but the chat software task is not over.

The chat software still has to read the data out of the TCP receive buffer. Suppose that at the very moment of reading, the app crashes because the phone has run out of memory or for some other reason.

The sender believes its message has been delivered to the other party, but the receiver never gets the message.

So the message is lost.

Packet loss occurs when using TCP protocol

The probability is small, but it does happen.

And it is perfectly logical.

So I have reached my conclusion: my reader did reply to the girl's message, but she never received it because it was lost, and it was lost because the chat software on her phone crashed at the very moment the message arrived.

And so the girl realised that she had wrongly blamed her boyfriend, and tearfully insisted that he buy her a new iPhone that does not crash.

Uh... Brothers, if you think I did the right thing, please type "positive energy" in the comment section.

How to solve this kind of packet loss problem?

The story ends here. Now that we have all been moved, let's speak frankly.

Everything I said above is true; none of it is made up.

But a chat app as mature as the green-skinned one could hardly have failed to consider this.

You should remember that we mentioned at the beginning of this article that for simplicity, we omitted the server side, and changed from three-terminal communication to two-terminal communication, so there is this packet loss problem.

Now let's add the server back in.

Chat software three-terminal communication

Have you noticed that after chatting a lot on the phone, when you log in to the desktop version it can synchronize the recent chat history? In other words, the server probably records the data we have sent recently. Assuming each message has an id, the server and the chat software can compare the id of the latest message to tell whether the messages on both sides are consistent, much like reconciling accounts.

For the sender, regularly reconciling with the server is enough to find out which message was not sent successfully, and it can simply resend that message.

If the receiver's chat software crashes, then after restarting it only needs to talk to the server briefly to find out which data is missing and sync it, so the packet loss described above does not occur.

It can be seen that TCP only guarantees the message reliability of the transport layer, and does not guarantee the message reliability of the application layer. If we also want to ensure the message reliability of the application layer, we need the application layer to implement the logic to ensure it.
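The reconciliation idea can be sketched in a few lines. Everything here is hypothetical: the class names, the in-memory list standing in for the server's storage, and the assumption that message ids are consecutive integers starting from 1. It is not how any real chat product works, only an illustration of comparing ids to find what is missing.

```python
class ChatServer:
    """Keeps every message under an increasing id (in-memory stand-in)."""

    def __init__(self):
        self._messages = []  # index i holds the message with id i + 1

    def store(self, text: str) -> int:
        self._messages.append(text)
        return len(self._messages)  # id of the message just stored

    def messages_after(self, last_seen_id: int) -> list:
        """Return (id, text) pairs that the client has not confirmed yet."""
        return [
            (index + 1, text)
            for index, text in enumerate(self._messages)
            if index + 1 > last_seen_id
        ]


class ChatClient:
    def __init__(self):
        self.last_seen_id = 0  # a real app would persist this across crashes
        self.inbox = []

    def sync(self, server: ChatServer) -> int:
        """Pull anything newer than last_seen_id and return how many arrived."""
        missing = server.messages_after(self.last_seen_id)
        for message_id, text in missing:
            self.inbox.append(text)
            self.last_seen_id = message_id
        return len(missing)


if __name__ == "__main__":
    server, client = ChatServer(), ChatClient()
    server.store("hello")
    client.sync(server)
    server.store("are you there?")  # arrives while the client app is crashed
    server.store("reply to me!")
    print(client.sync(server), client.inbox)  # after restart: catch up
```

Because the client only advances `last_seen_id` after it has stored a message, a crash in the middle of receiving just means that message is fetched again on the next sync.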

So here comes the question: the two ends can reconcile with each other directly, so why bring in a server as a third end?

There are three main reasons.

- First, with direct two-end communication, if you have 1000 friends in the chat software you have to establish 1000 connections. With a server, you only need one connection to the server. The fewer resources the chat software consumes, the more battery the phone saves.

- Second, security. With direct two-end communication, you would synchronize your chat history with anyone who asks to reconcile, which is not appropriate: if the other party has ulterior motives, the information leaks. Introducing a server makes it easy to perform all kinds of authentication checks.

- Third, software versions. Once the software is installed on a user's phone, whether to update it is up to the user. With direct two-end communication, a large version gap between the two ends easily causes compatibility problems. A server can force some versions to upgrade, refusing service otherwise. For most compatibility problems, though, adding compatibility logic on the server is enough, and there is no need to force users to update.

So by now everyone should understand that I did not remove the server merely for simplicity.

Summary

- From the sender to the receiver, the link is very long, and packet loss may occur anywhere along it. Packet loss is almost inevitable.

- Usually, there is no need to pay attention to packet loss. Most of the time, TCP's retransmission mechanism ensures message reliability.

- When you find that a service is behaving abnormally, for example an interface has very high latency and keeps failing, you can use the ping or mtr command to check whether packets are being lost on the intermediate link.

- TCP only guarantees the message reliability of the transport layer, and does not guarantee the message reliability of the application layer. If we also want to ensure the message reliability of the application layer, we need the application layer to implement the logic to ensure it.

Finally, let me leave you a question, how does the mtr command know the IP address of each hop?