CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Written above & the author’s personal understanding

At present, in the entire autonomous driving system, the perception module plays a vital role. Only after the autonomous vehicle driving on the road obtains accurate sensing results through the perception module can the downstream regulation and control of the autonomous driving system be achieved. The module makes timely and correct judgments and behavioral decisions. Currently, cars with autonomous driving functions are usually equipped with a variety of data information sensors including surround-view camera sensors, lidar sensors, and millimeter-wave radar sensors to collect information in different modalities to achieve accurate perception tasks.

Because the BEV perception algorithm based on pure vision requires lower hardware and deployment costs, and the BEV spatial perception results it outputs can be easily used for downstream planning and control tasks, it has received widespread attention from industry and academia. In recent years, many visual perception algorithms based on BEV space have been proposed and achieved excellent perception performance on public data sets.

At present, perception algorithms based on BEV space can be roughly divided into two types of algorithm models based on the way to construct BEV features:

- One type is the forward BEV feature construction method represented by the LSS algorithm. This type of perception algorithm model first uses the depth estimation network in the perception model to predict the semantic feature information and discrete depth probability distribution of each pixel of the feature map. Then the obtained semantic feature information and discrete depth probability are used to construct semantic frustum features using outer product operations, and BEV pooling and other methods are used to finally complete the construction process of BEV spatial features.

- The other type is the reverse BEV feature construction method represented by the BEVFormer algorithm. This type of perception algorithm model first explicitly generates 3D voxel coordinate points in the perceived BEV space, and then uses the internal and external parameters of the camera to convert the 3D voxels into The coordinate points are projected back to the image coordinate system, and the pixel features of the corresponding feature positions are extracted and aggregated to construct the BEV features in the BEV space.

Although both types of algorithms can more accurately generate features in the BEV space and complete the final 3D perception results, in the current 3D target perception algorithms based on the BEV space, such as the BEVFormer algorithm, there are the following two problems:

- Question 1: Since the overall framework of the BEVFormer perception algorithm model adopts the Encoder-Decoder network structure, the main idea is to use the Encoder module to obtain the features in the BEV space, and then use the Decoder module to predict the final perception result, and pass the output perception The process of calculating the loss between the results and the ground truth target to achieve the BEV spatial features predicted by the model. However, the parameter update method of this network model will rely too much on the perceptual performance of the Decoder module, which may lead to the problem that the BEV features output by the model are not aligned with the true value BEV features, thus further restricting the final performance of the perceptual model.

- Question 2: Since the Decoder module of the BEVFormer perception algorithm model still uses the steps of the self-attention module -> cross-attention module -> feedforward neural network in the Transformer to complete the construction of the Query feature and output the final detection result, the whole process is still A black box model that lacks good interpretability. At the same time, there is also great uncertainty in the one-to-one matching process between Object Query and the true value target during the model training process.

Therefore, in view of the two problems mentioned above in the BEVFormer perception algorithm model, we improved on the basis of the BEVFormer algorithm model and proposed a 3D detection algorithm model CLIP-BEVFormer in the BEV scene based on surround images. By using contrastive learning way to enhance the model's ability to construct BEV features, and achieve SOTA perceptual performance on the nuScenes data set.

Article link: https://arxiv.org/pdf/2403.08919.pdf

Overall architecture & details of the network model

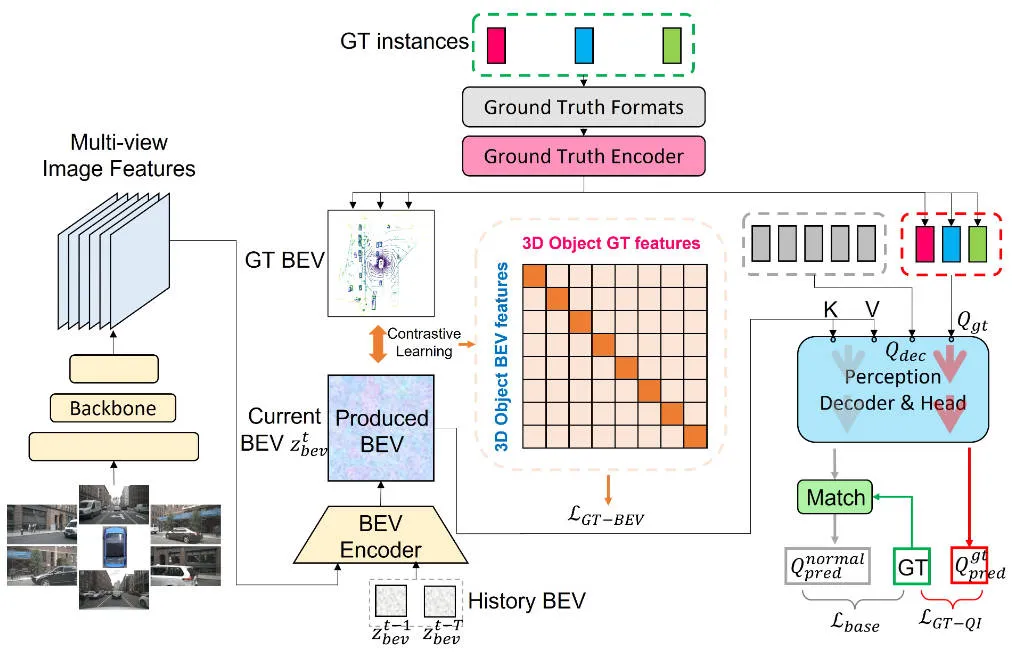

Before introducing the details of the specific CLIP-BEVFormer perception algorithm model proposed in this article, the following figure shows the overall network structure of the CLIP-BEVFormer algorithm we proposed.

The overall flow chart of the CLIP-BEVFormer perception algorithm model proposed in this article

The overall flow chart of the CLIP-BEVFormer perception algorithm model proposed in this article

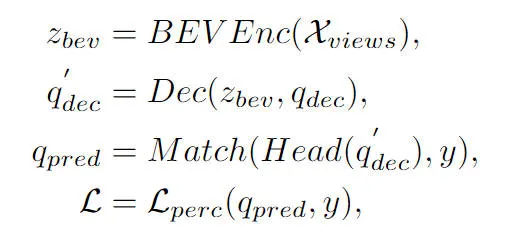

It can be seen from the overall flow chart of the algorithm that the CLIP-BEVFormer algorithm model proposed in this article is improved on the basis of the BEVFormer algorithm model. Here is a brief review of the implementation process of the BEVFormer perception algorithm model. First, the BEVFormer algorithm model inputs the surround image data collected by the camera sensor, and uses the 2D image feature extraction network to extract the multi-scale semantic feature information of the input surround image. Secondly, the Encoder module containing temporal self-attention and spatial cross-attention is used to complete the conversion process of 2D image features to BEV spatial features. Then, a set of Object Query is generated in the form of normal distribution in the 3D perception space and sent to the Decoder module to complete the interactive utilization of spatial features with the BEV space features output by the Encoder module. Finally, the feedforward neural network is used to predict the semantic features queried by Object Query, and the final classification and regression results of the network model are output. At the same time, during the training process of the BEVFormer algorithm model, the one-to-one Hungarian matching strategy is used to complete the distribution process of positive and negative samples, and classification and regression losses are used to complete the update process of the overall network model parameters. The overall detection process of the BEVFormer algorithm model can be expressed by the following mathematical formula:

Among them, in the formula represents the Encoder feature extraction module in the BEVFormer algorithm, represents the Decoder decoding module in the BEVFormer algorithm, represents the true value target label in the data set, and represents the 3D perception result output by the current BEVFormer algorithm model.

Generation of true value BEV

As mentioned above, the vast majority of existing 3D target detection algorithms based on BEV space do not explicitly supervise the generated BEV space features, resulting in BEV features generated by the model that may be inconsistent with the real BEV features. The problem is that this distribution difference of BEV spatial features will restrict the final perceptual performance of the model. Based on this consideration, we proposed the Ground Truth BEV module. Our core idea in designing this module is to enable the BEV features generated by the model to be aligned with the current true value BEV features, thereby improving the performance of the model.

Specifically, as shown in the overall network framework diagram, we use a ground truth encoder () to encode the category label and spatial bounding box position information of any ground truth instance on the BEV feature map. This process can It can be expressed as the following formula:

The feature dimension in the formula has the same size as the generated BEV feature map, which represents the encoded feature information of a true value target. During the encoding process, we adopted two forms, one is a large language model (LLM), and the other is a multi-layer perceptron (MLP). Through experimental results, we found that the two methods basically achieved the same performance.

In addition, in order to further enhance the boundary information of the true target on the BEV feature map, we crop the true target according to its spatial position on the BEV feature map, and use a pooling operation on the cropped features. To construct the corresponding feature information representation, the process can be expressed in the following form:

Finally, in order to achieve further alignment between the BEV features generated by the model and the true value BEV features, we used the contrastive learning method to optimize the element relationship and distance between the two types of BEV features. The optimization process can be expressed in the following form:

The and in the formula respectively represent the similarity matrix between the generated BEV features and the true value BEV features, represent the logical scale factor in contrastive learning, represent the multiplication operation between matrices, and represent the cross-entropy loss function. Through the above contrastive learning method, the method we propose can provide clearer feature guidance for the generated BEV features and improve the perceptual ability of the model.

Truth target query interaction

This part is also mentioned in the previous article. The Object Query in the BEVFormer perception algorithm model interacts with the generated BEV features through the Decoder module to obtain the corresponding target query features. However, the entire process is still a black box process and lacks a complete process. understand. To address this problem, we introduced the truth value query interaction module, which uses the truth value target to execute the BEV feature interaction of the Decoder module to stimulate the learning process of model parameters. Specifically, we introduce the truth target encoding information output by the truth encoder () module into Object Query to participate in the decoding process of the Decoder module. As normal Object Query, we participate in the same self-attention module, cross-attention module and The feedforward neural network outputs the final perception result. However, it should be noted that during the decoding process, all Object Query uses parallel computing to prevent the leakage of true value target information. The entire truth value target query interaction process can be abstractly expressed in the following form:

Among them, in the formula represents the initialized Object Query, and represents the output result of the true value Object Query through the Decoder module and the sensing detection head respectively. By introducing the interaction process of the true value target in the model training process, the true value target query interaction module we proposed can realize the interaction between the true value target query and the true value BEV feature, thereby assisting the parameter update process of the model Decoder module.

Experimental results & evaluation indicators

Quantitative analysis part

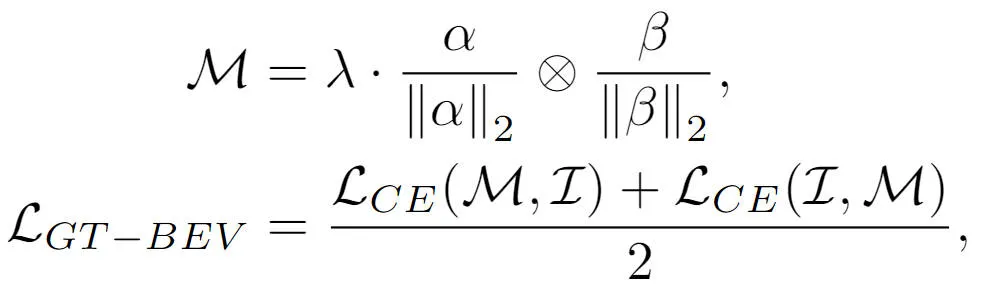

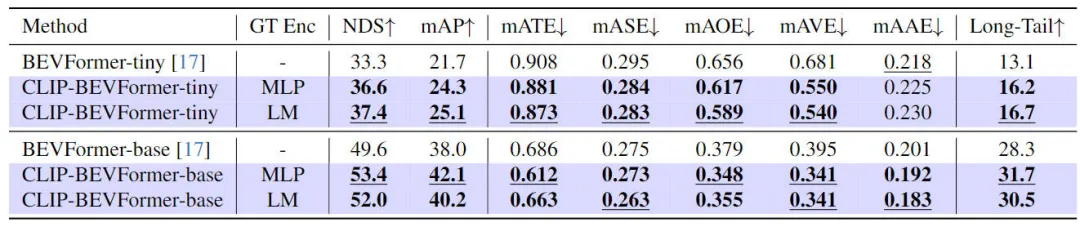

In order to verify the effectiveness of the CLIP-BEVFormer algorithm model we proposed, we conducted relevant experiments on the nuScenes data set from the perspectives of 3D perception effects, long-tail distribution of target categories in the data set, and robustness. The following table is Comparison of the accuracy of our proposed algorithm model and other 3D perception algorithm models on the nuScenes dataset.

Comparative results between the method proposed in this article and other perception algorithm models

In this part of the experiment, we evaluated the perceptual performance under different model configurations. Specifically, we applied the CLIP-BEVFormer algorithm model to the tiny and base variants of BEVFormer. In addition, we also explored the impact of using pre-trained CLIP models or MLP layers as ground truth target encoders on model perceptual performance. It can be seen from the experimental results that whether it is the original tiny or base variant, after applying the CLIP-BEVFormer algorithm we proposed, the NDS and mAP indicators have stable performance improvements. In addition, through the experimental results, we can find that the algorithm model we proposed is not sensitive to whether the MLP layer or the language model is selected for the ground truth target encoder. This flexibility can make the CLIP-BEVFormer algorithm we proposed more efficient. Adaptable and easy to deploy on the vehicle. In summary, the performance indicators of various variants of our proposed algorithm model consistently indicate that the proposed CLIP-BEVFormer algorithm model has good perceptual robustness and can achieve excellent detection performance under different model complexity and parameter amounts. .

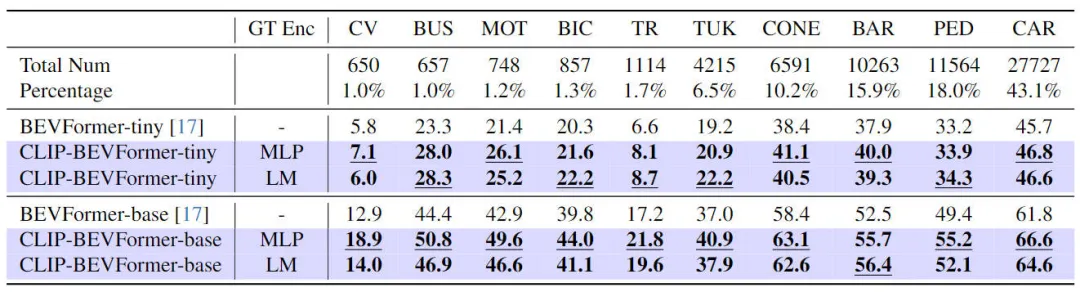

In addition to verifying the performance of our proposed CLIP-BEVFormer on 3D perception tasks, we also conducted long-tail distribution experiments to evaluate the robustness and generalization ability of our algorithm in the face of the presence of long-tail distributions in the data set. , the experimental results are summarized in the table below

Performance of the proposed CLIP-BEVFormer algorithm model on long-tail problems

It can be seen from the experimental results in the above table that the nuScenes data set shows a huge imbalance in the number of categories. Some categories such as (construction vehicles, buses, motorcycles, bicycles, etc.) account for a very low proportion, but for The proportion of cars is very high. We evaluate the perceptual performance of the proposed CLIP-BEVFormer algorithm model on feature categories by conducting relevant experiments with long-tail distributions, thereby verifying its processing ability to solve less common categories. It can be seen from the above experimental data that the proposed CLIP-BEVFormer algorithm model has achieved performance improvements in all categories, and in categories that account for a very small proportion, the CLIP-BEVFormer algorithm model has demonstrated obvious substantive performance Improve.

Considering that autonomous driving systems in real environments need to face problems such as hardware failures, severe weather conditions, or sensor failures that are easily caused by man-made obstacles, we further experimentally verified the robustness of the proposed algorithm model. Specifically, in order to simulate the sensor failure problem, we randomly blocked the camera of a camera during the inference process of the model, so as to simulate the scene where the camera may fail. The relevant experimental results are shown in the table below

Robustness experimental results of the proposed CLIP-BEVFormer algorithm model

Robustness experimental results of the proposed CLIP-BEVFormer algorithm model

It can be seen from the experimental results that, no matter under the tiny or base model parameter configuration, the CLIP-BEVFormer algorithm model we proposed is always better than the BEVFormer baseline model with the same configuration, which verifies that our algorithm model can simulate sensor failure situations. superior performance and excellent robustness.

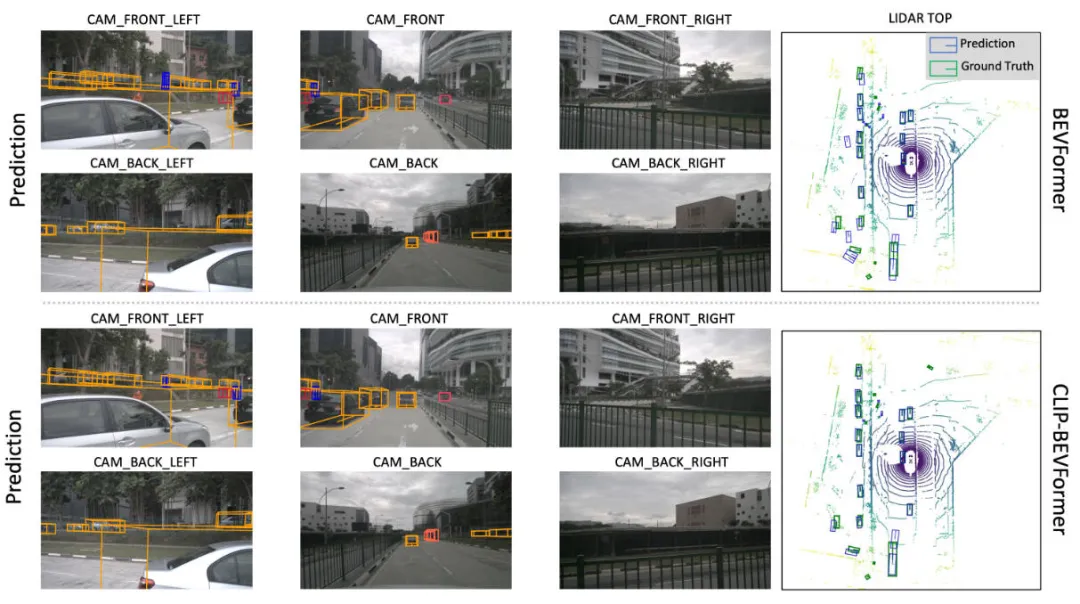

Qualitative analysis part

The figure below shows the visual comparison of the perception results of our proposed CLIP-BEVFormer algorithm model and the BEVFormer algorithm model. It can be seen from the visual results that the perception results of the CLIP-BEVFormer algorithm model we proposed are closer to the true value target, indicating the effectiveness of the true value BEV feature generation module and the true value target query interaction module we proposed.

Visual comparison of the sensing results of the proposed CLIP-BEVFormer algorithm model and the BEVFormer algorithm model

in conclusion

In this article, in view of the lack of display supervision in the process of generating BEV feature maps in the original BEVFormer algorithm and the uncertainty of interactive query between Object Query and BEV features in the Decoder module, we proposed the CLIP-BEVFormer algorithm model and started from the algorithm. Experiments were conducted on the model's 3D perception performance, target long-tail distribution, and robustness to sensor failures. A large number of experimental results show the effectiveness of the CLIP-BEVFormer algorithm model we proposed.