A top-ranked multi-modal large model goes open source! It's 01.AI again, it's Kai-Fu Lee again

Leading authoritative benchmarks in both Chinese and English, Kai-Fu Lee's 01.AI has handed in its multi-modal large model answer sheet!

This comes less than three months after the release of its first open source large models, Yi-34B and Yi-6B.

The model is called Yi Vision Language (Yi-VL) and is now officially open source to the world.

It belongs to the same Yi series and likewise comes in two versions:

Yi-VL-34B and Yi-VL-6B.

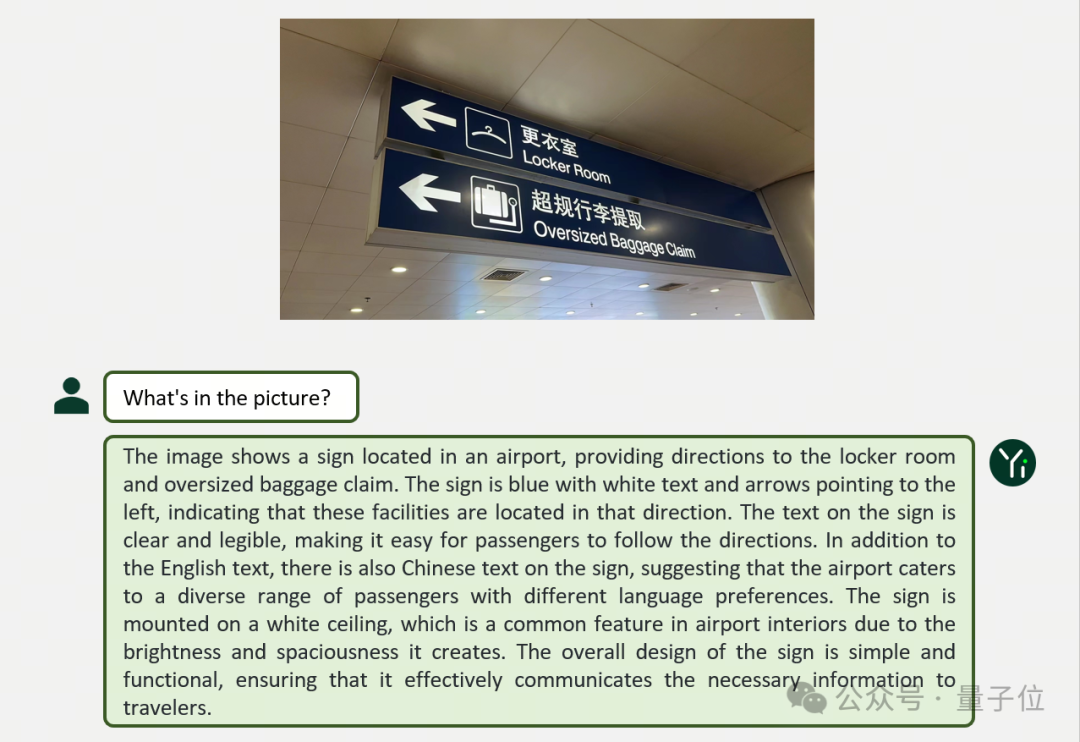

Let's first look at two examples to see how Yi-VL performs in diverse scenarios such as image-text dialogue:

Yi-VL analyzes the whole picture in detail, not only explaining what the sign says but even noting the "ceiling".

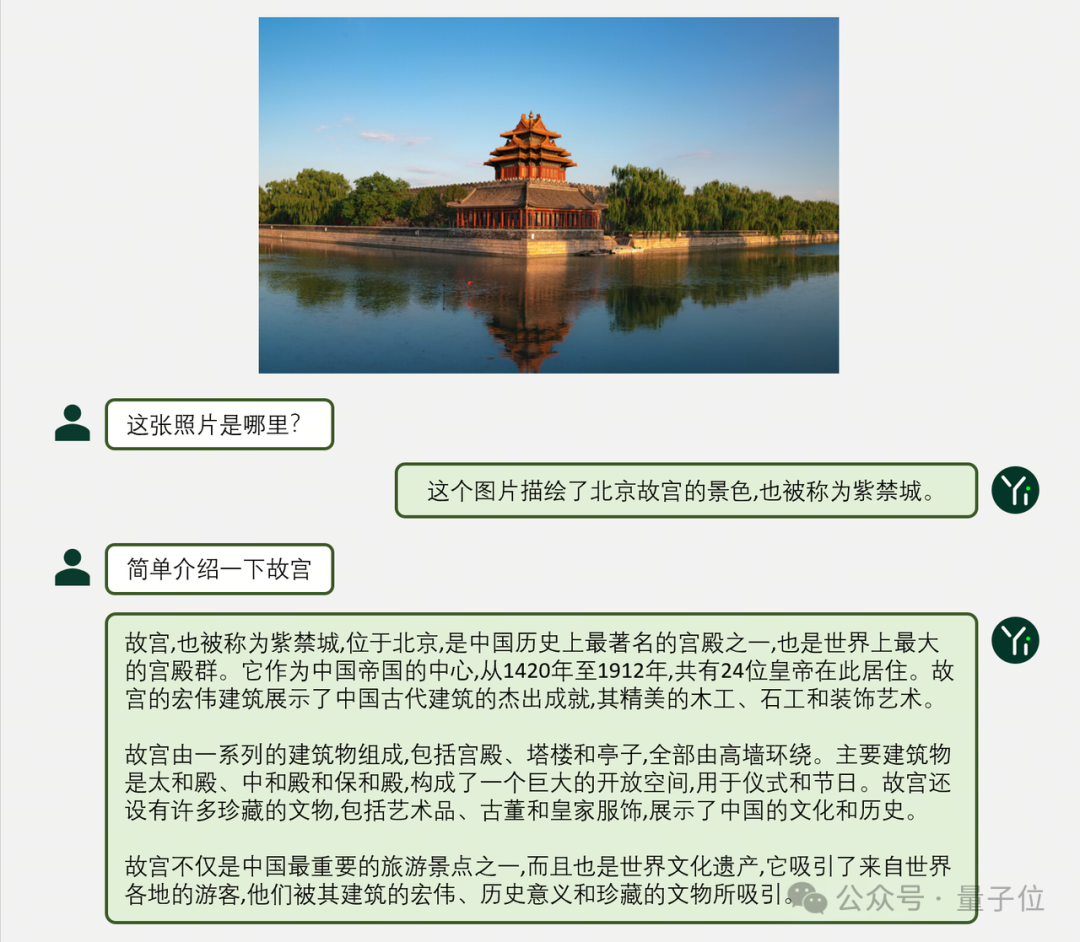

In Chinese, Yi-VL also answers clearly, methodically, and accurately:

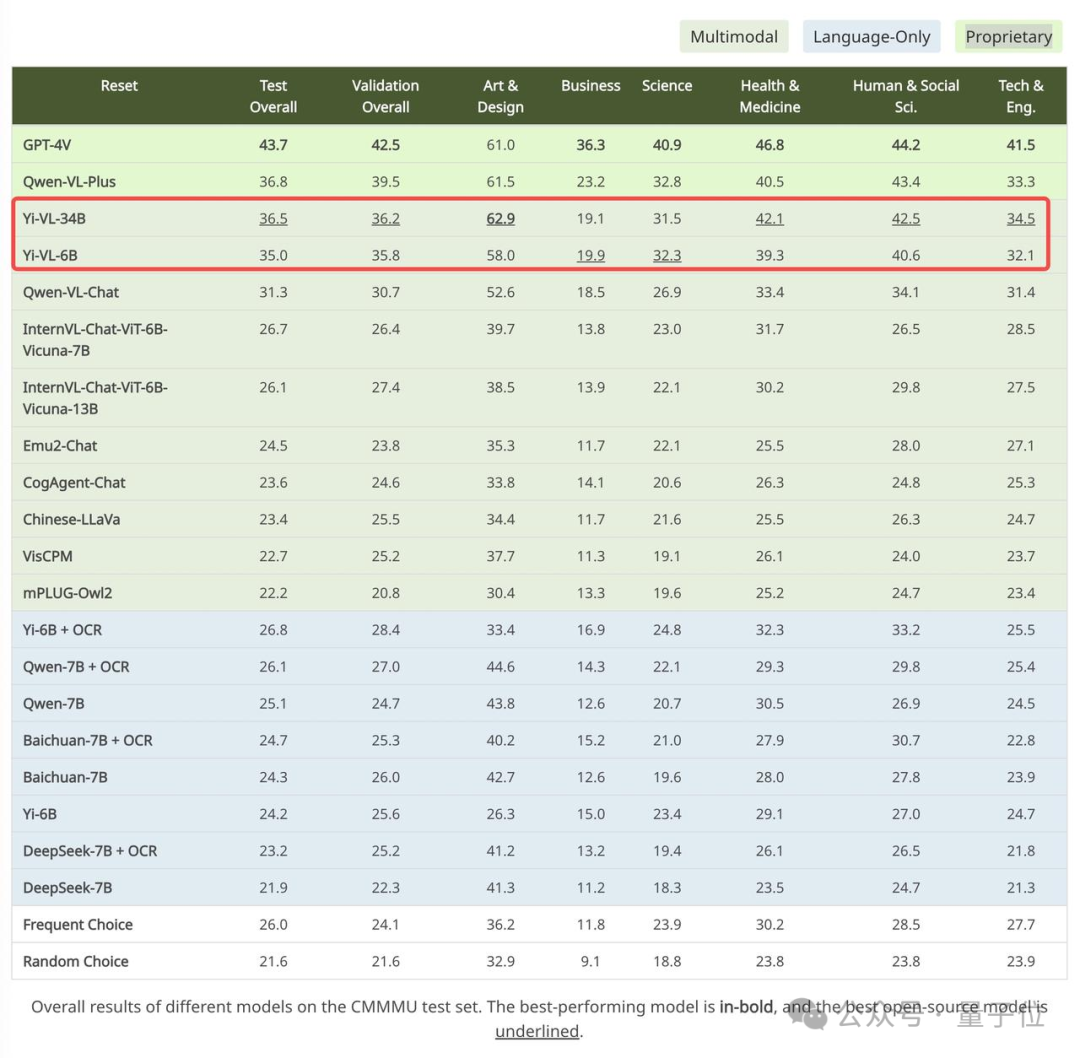

In addition, the team has published benchmark results.

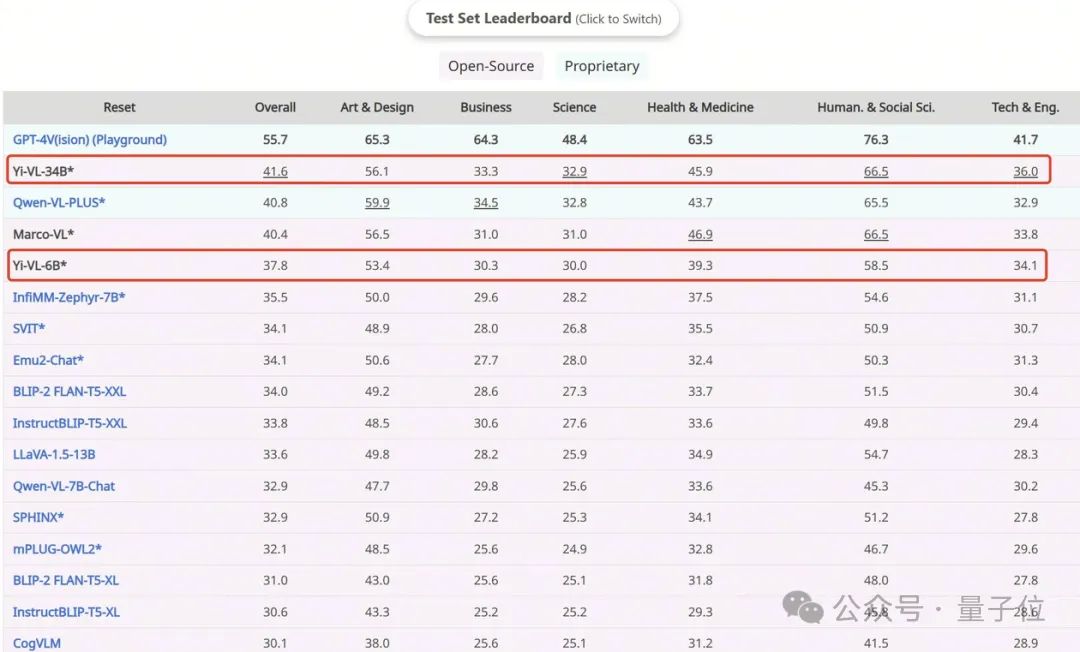

Yi-VL-34B reaches an accuracy of 41.6% on the English data set MMMU, second only to GPT-4V at 55.7% and ahead of a series of other multi-modal large models.

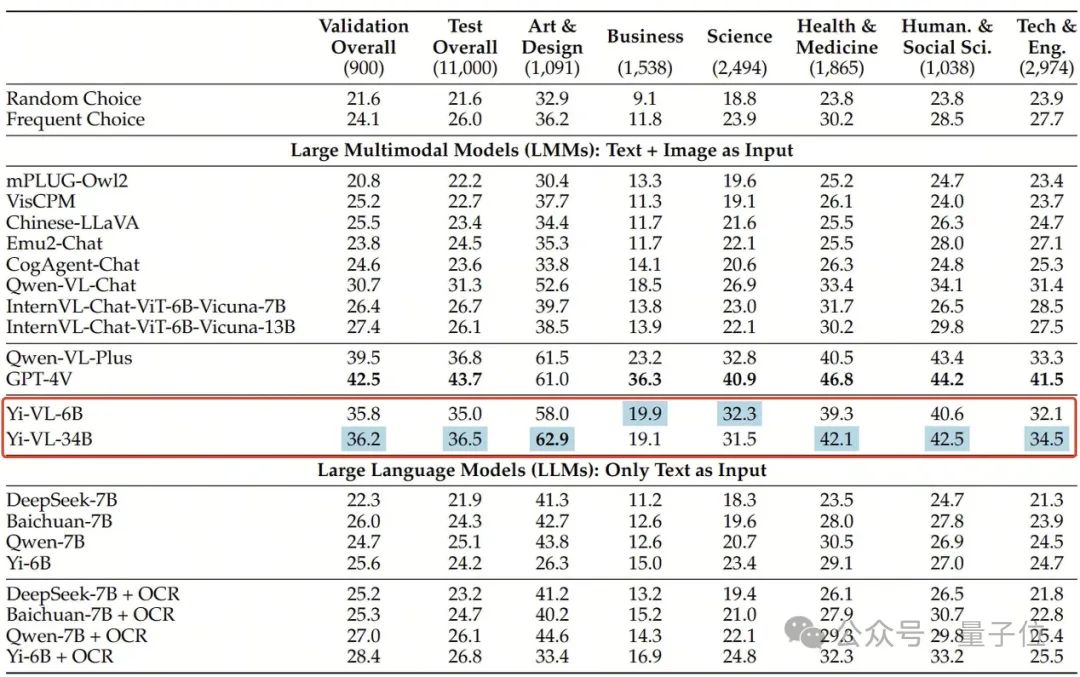

On the Chinese data set CMMMU, Yi-VL-34B reaches an accuracy of 36.5%, ahead of the current leading open source multi-modal models.

What does Yi-VL look like?

Yi-VL is built on the Yi language model and inherits its strong text understanding capabilities. Aligning images to that language model is enough to produce a good multi-modal vision-language model, which is one of the core highlights of Yi-VL.

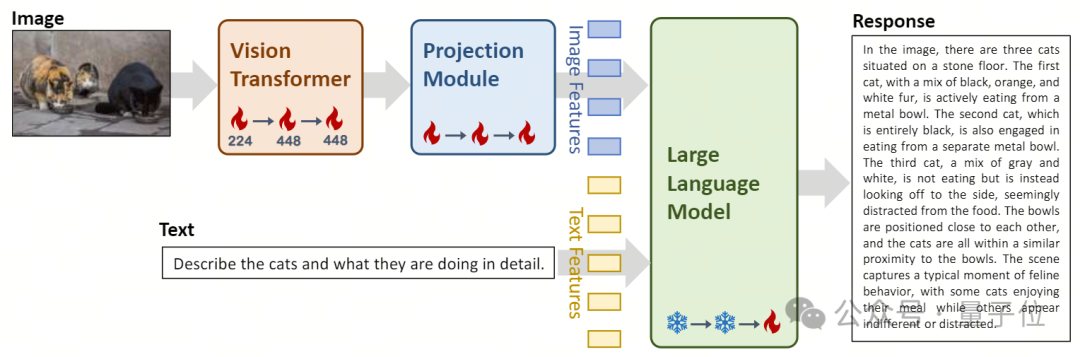

In terms of architectural design, the Yi-VL model is based on the open source LLaVA architecture and contains three main modules:

- Vision Transformer (ViT for short) is used for image encoding. Its trainable parameters are initialized from the open source OpenCLIP ViT-H/14 model, and it learns to extract features from large-scale "image-text" pairs, giving the model the ability to process and understand images.

- The Projection module gives the model the ability to align image features with the text feature space. It consists of a Multilayer Perceptron (MLP) containing layer normalizations. This design lets the model fuse and process visual and text information more effectively, improving the accuracy of multi-modal understanding and generation.

- The large language model, Yi-34B-Chat or Yi-6B-Chat, provides Yi-VL with powerful language understanding and generation capabilities. This part of the model helps Yi-VL understand complex language structures in depth and generate coherent, relevant text output.

△ Overview of the Yi-VL model architecture and training process
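The data flow through the three modules can be sketched in a few lines of plain Python. This is only an illustrative toy, not 01.AI's implementation: the layer order inside the projection (Linear, LayerNorm, GELU, Linear, LayerNorm), the tiny dimensions, the random weights, the `Projection` and `build_llm_input` names, and the choice to simply prepend image tokens to the text embeddings are all assumptions made for the example. The article only states that the projection is an MLP containing layer normalizations.

```python
import math
import random


def linear(x, weight, bias):
    """Apply y = W x + b to one feature vector (lists of floats)."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and unit variance (no learned scale/shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def gelu(x):
    """Exact GELU activation, computed with the error function."""
    return [0.5 * v * (1.0 + math.erf(v / math.sqrt(2.0))) for v in x]


def init_linear(rng, in_dim, out_dim):
    """Small random weights standing in for trained parameters."""
    weight = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    return weight, [0.0] * out_dim


class Projection:
    """Hypothetical two-layer MLP with layer norms (layer order is an assumption)."""

    def __init__(self, vision_dim, text_dim, seed=0):
        rng = random.Random(seed)
        self.fc1 = init_linear(rng, vision_dim, text_dim)
        self.fc2 = init_linear(rng, text_dim, text_dim)

    def __call__(self, patch_features):
        projected = []
        for feat in patch_features:
            hidden = gelu(layer_norm(linear(feat, *self.fc1)))
            projected.append(layer_norm(linear(hidden, *self.fc2)))
        return projected


def build_llm_input(image_tokens, text_embeddings):
    """Simplification: place projected image tokens in front of the text embeddings."""
    return image_tokens + text_embeddings


# Toy sizes for illustration only; the real models are far larger.
VISION_DIM, TEXT_DIM = 8, 12
rng = random.Random(1)
patches = [[rng.gauss(0, 1) for _ in range(VISION_DIM)] for _ in range(4)]  # stand-in ViT output
text = [[rng.gauss(0, 1) for _ in range(TEXT_DIM)] for _ in range(3)]       # stand-in text embeddings

image_tokens = Projection(VISION_DIM, TEXT_DIM)(patches)
sequence = build_llm_input(image_tokens, text)
print(len(sequence), len(sequence[0]))  # 7 tokens, each of width TEXT_DIM
```

The point of the sketch is the shape change: each image patch feature of width `VISION_DIM` leaves the projection with width `TEXT_DIM`, so the language model can consume image tokens and text tokens as one sequence.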

In terms of training methods, the training process of the Yi-VL model is divided into three stages, aiming to comprehensively improve the model's visual and language processing capabilities.

In the first stage, a data set of 100 million "image-text" pairs is used to train the ViT and Projection modules.

At this stage, the image resolution is set to 224x224 to strengthen the ViT's knowledge acquisition within this specific architecture while achieving efficient alignment with the large language model.

In the second stage, the image resolution of ViT is increased to 448x448, making the model better at recognizing complex visual details. About 25 million "image-text" pairs are used in this stage.

In the third stage, the parameters of the entire model are opened for training, with the goal of improving the model's performance in multi-modal chat interactions. The training data covers diverse data sources, with a total of approximately 1 million "image-text" pairs, ensuring the breadth and balance of the data.
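The three stages can be summarized as a small configuration table, together with the arithmetic that shows why the jump from 224x224 to 448x448 matters. The sketch below is an illustration under stated assumptions: the `Stage` structure and module names are hypothetical, the trainable modules for stage 2 are assumed to match stage 1 because the text does not list them, and the stage 3 resolution is left unset because the text does not give it. The patch size of 14 pixels comes from the "/14" in ViT-H/14.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

PATCH_SIZE = 14  # the "/14" in ViT-H/14: each patch covers 14x14 pixels


@dataclass(frozen=True)
class Stage:
    name: str
    image_size: Optional[int]   # square input resolution; None if not stated in the article
    num_pairs: int              # approximate number of image-text pairs
    trainable: Tuple[str, ...]  # modules whose parameters are updated


STAGES = (
    Stage("stage 1", 224, 100_000_000, ("vit", "projection")),
    # Assumption: the article does not list the trainable modules for stage 2,
    # so this sketch reuses the stage 1 setting.
    Stage("stage 2", 448, 25_000_000, ("vit", "projection")),
    # The article gives no resolution for stage 3, so it stays None here.
    Stage("stage 3", None, 1_000_000, ("vit", "projection", "llm")),
)


def patches_per_image(image_size: int, patch_size: int = PATCH_SIZE) -> int:
    """Number of non-overlapping patches the ViT sees (class token excluded)."""
    if image_size % patch_size != 0:
        raise ValueError("image size must be a multiple of the patch size")
    side = image_size // patch_size
    return side * side


for stage in STAGES:
    if stage.image_size is None:
        patches = "n/a"
    else:
        patches = patches_per_image(stage.image_size)
    print(f"{stage.name}: {stage.num_pairs:,} pairs, patches per image: {patches}")

total_pairs = sum(stage.num_pairs for stage in STAGES)
print(f"total pairs across stages: {total_pairs:,}")
```

Doubling the side length quadruples the number of patches per image (256 at 224x224 versus 1,024 at 448x448), which is what gives the model more visual detail to work with in the second stage, at the cost of longer image token sequences.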

The 01.AI technical team has also verified that, building on the Yi language model's strong language understanding and generation capabilities, other multi-modal training methods such as BLIP, Flamingo, and EVA can be used to quickly train multi-modal image-text models capable of efficient image understanding and fluent image-text dialogue.

The Yi series of models can serve as the base language model for multi-modal models, giving the open source community a new option. Meanwhile, the 01.AI multi-modal team is exploring multi-modal pre-training from scratch, aiming to approach and surpass GPT-4V faster and reach the world's top tier.

Currently, the Yi-VL model is open to the public on platforms such as Hugging Face and ModelScope. Users can try out the model for themselves in diverse scenarios such as image-text dialogue.

Surpassing a series of multi-modal large models

In the new multi-modal benchmark MMMU, both versions Yi-VL-34B and Yi-VL-6B performed well.

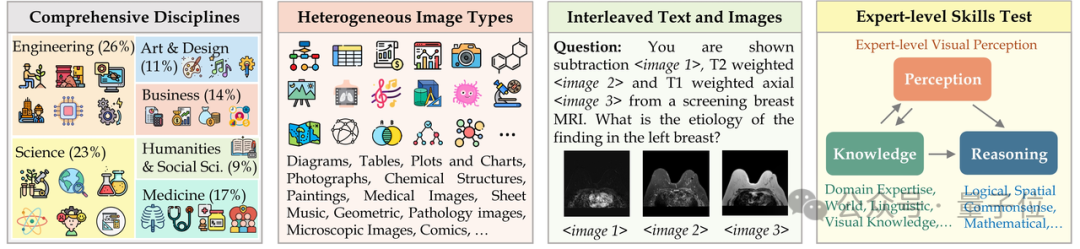

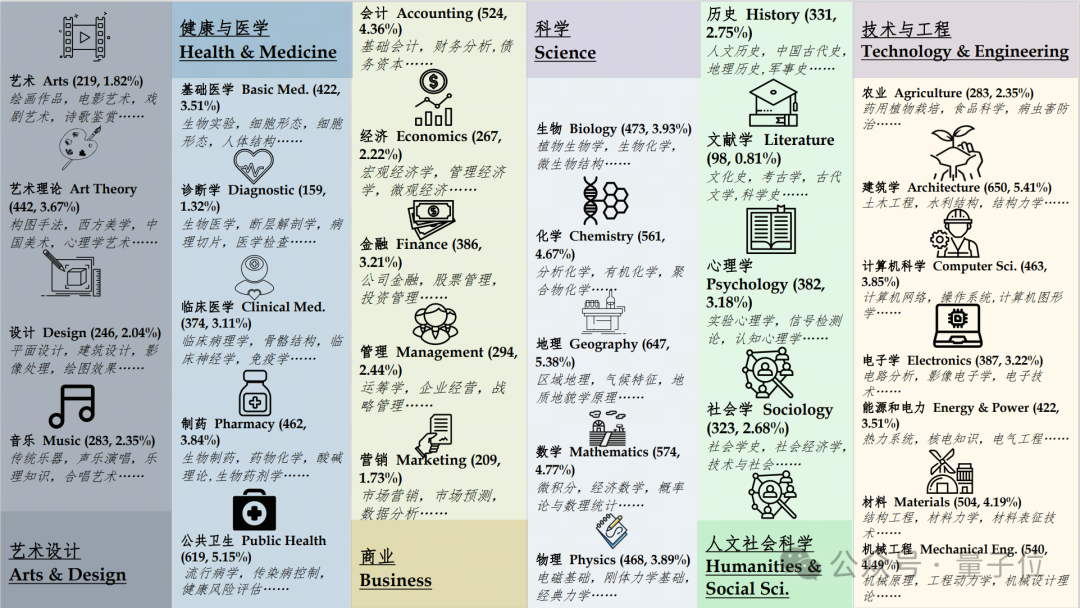

The MMMU (Massive Multi-discipline Multi-modal Understanding & Reasoning) data set contains 11,500 questions from six core disciplines (art and design, business, science, health and medicine, humanities and social sciences, and technology and engineering). It involves highly heterogeneous image types and interleaved text-image information, placing extremely high demands on a model's advanced perception and reasoning capabilities.

Yi-VL-34B surpassed a series of multi-modal large models on this test set with an accuracy of 41.6%, second only to GPT-4V (55.7%), demonstrating strong interdisciplinary knowledge understanding and application ability.

Similarly, on the CMMMU data set created for Chinese-language scenarios, the Yi-VL model shows its distinctive advantage of "understanding Chinese users better".

CMMMU contains about 12,000 Chinese multi-modal questions derived from university exams, tests and textbooks.

On this test set, GPT-4V reaches an accuracy of 43.7%, followed by Yi-VL-34B at 36.5%, which is ahead of the current leading open source multi-modal models.
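To put the two benchmarks side by side, the gap to GPT-4V can be worked out directly from the accuracy figures quoted in this article. The short sketch below uses only those four numbers; the `SCORES` table and `compare` helper are hypothetical names introduced for the example.

```python
# Accuracy figures (percent) as quoted in this article.
SCORES = {
    "MMMU": {"GPT-4V": 55.7, "Yi-VL-34B": 41.6},
    "CMMMU": {"GPT-4V": 43.7, "Yi-VL-34B": 36.5},
}


def compare(benchmark):
    """Return (gap in percentage points, Yi-VL-34B score as a percent of GPT-4V's)."""
    scores = SCORES[benchmark]
    gap = round(scores["GPT-4V"] - scores["Yi-VL-34B"], 1)
    relative = round(100 * scores["Yi-VL-34B"] / scores["GPT-4V"], 1)
    return gap, relative


for name in SCORES:
    gap, relative = compare(name)
    print(f"{name}: {gap} points behind GPT-4V, {relative}% of its accuracy")
```

By these numbers, Yi-VL-34B trails GPT-4V by 14.1 points on MMMU and by 7.2 points on CMMMU, so the gap is noticeably narrower on the Chinese benchmark.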

Project addresses:

[1] https://huggingface.co/01-ai

[2] https://www.modelscope.cn/organization/01ai